The Semantic Layer in 2026: Why Data Teams Are Rebuilding Their Stack Around Metric Definitions

Last updated: April 2026

The marketing director says revenue grew 14 percent last quarter. The CFO says it grew 11. The product team’s dashboard shows 12. Nobody is lying. They are pulling from the same warehouse, in some cases the same tables. They are simply applying different definitions of “revenue”: one nets out refunds and another does not; one recognizes revenue when the cash arrives (cash basis), another when it is earned (accrual basis). Every meeting that touches the number opens with five minutes of definitional archaeology before anyone can talk about the actual business.
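To make the disagreement concrete, here is a minimal Python sketch that applies three defensible definitions of first-quarter revenue to the same four orders. The `Order` fields, dates, and dollar amounts are invented for illustration. To keep the arithmetic simple, refunds are assumed to land in the same quarter as the original payment.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

@dataclass(frozen=True)
class Order:
    amount: int              # invoiced amount, in whole dollars
    refunded: int            # portion later refunded
    invoiced_on: date        # when the revenue was earned (accrual basis)
    paid_on: Optional[date]  # when the cash arrived (cash basis); None if unpaid

# Hypothetical sample data: the same four rows every team reads.
ORDERS = [
    Order(1000, 0, date(2026, 1, 10), date(2026, 1, 15)),
    Order(500, 200, date(2026, 2, 5), date(2026, 2, 20)),
    Order(800, 0, date(2026, 3, 25), date(2026, 4, 10)),   # earned in Q1, paid in Q2
    Order(300, 0, date(2025, 12, 20), date(2026, 1, 5)),   # earned in Q4, paid in Q1
]

Q1_START, Q1_END = date(2026, 1, 1), date(2026, 3, 31)

def in_q1(d: Optional[date]) -> bool:
    return d is not None and Q1_START <= d <= Q1_END

def gross_accrual(orders):
    """Revenue earned in Q1, refunds ignored."""
    return sum(o.amount for o in orders if in_q1(o.invoiced_on))

def net_accrual(orders):
    """Revenue earned in Q1, net of refunds."""
    return sum(o.amount - o.refunded for o in orders if in_q1(o.invoiced_on))

def net_cash(orders):
    """Cash collected in Q1, net of refunds."""
    return sum(o.amount - o.refunded for o in orders if in_q1(o.paid_on))

for name, fn in [("gross_accrual", gross_accrual),
                 ("net_accrual", net_accrual),
                 ("net_cash", net_cash)]:
    print(name, fn(ORDERS))
```

The three figures come out to 2300, 2100, and 1600 for the same quarter. None of the functions is wrong. They answer different questions, which is why the definition needs to live in one agreed place.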

This is the problem the semantic layer was invented to solve, and it is the reason the category has gone from a niche concern to one of the most active areas of investment in the modern data stack. Over the last eighteen months, every major warehouse vendor has shipped or acquired semantic capabilities, an open specification has been published by a coalition of the largest players in the industry, and “semantic layer” has moved from a line item in architecture diagrams to a core decision that shapes how data teams build everything downstream of it.

This guide walks through what a semantic layer actually is, why the conversation has accelerated so dramatically in 2026, what the realistic implementation paths look like for data teams of different sizes, and where the technology is heading next.

What a semantic layer actually does

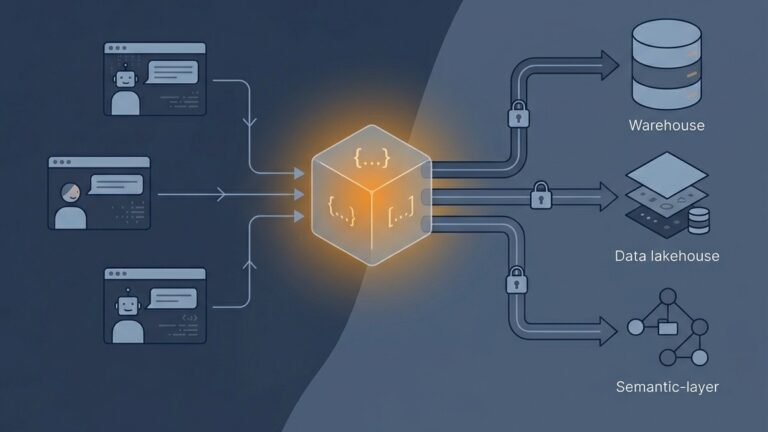

A semantic layer is a translation system. It sits between your transformed data tables and the people, dashboards, APIs, and AI agents that query them. Its job is to take business concepts — revenue, active customer, churn rate, MRR — and define them once, in code, in a single place that every downstream consumer must respect.

Without a semantic layer, those definitions live wherever someone happened to write them. A measure in a Looker model. A SQL CASE statement in a Mode notebook. A calculated field in Tableau. A formula in a Google Sheet that someone exported in 2023 and has been updating manually ever since. Each definition drifts independently. Every dashboard becomes its own source of truth, which is the same as saying nothing is.

With a semantic layer, the definition lives in a versioned, reviewed, testable file. When the finance team decides that revenue should now exclude a specific class of promotional credits, the change is made in one place. Every dashboard, every API call, every AI query that touches “revenue” inherits the new definition automatically. dbt Labs describes this as a hub-and-spoke architecture for metric definitions, and the metaphor is precise: one centralized hub, many consuming spokes, none of them allowed to redefine the metric on their own.
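A hub-and-spoke setup can be sketched in a few dozen lines of Python. The `Metric` and `MetricRegistry` names below are hypothetical and do not correspond to dbt, MetricFlow, or any other product’s API. The point is that consumers ask the hub for a metric by name instead of carrying their own SQL, so a definition change reaches all of them at once. The `credit_type` column is also an assumption made for illustration.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Metric:
    name: str
    expression: str                # SQL aggregate over the source table
    table: str
    filters: tuple[str, ...] = ()  # row-level conditions baked into the definition

class MetricRegistry:
    """The hub: the one place where metric definitions live."""

    def __init__(self) -> None:
        self._metrics: dict[str, Metric] = {}

    def define(self, metric: Metric) -> None:
        # Redefining a metric replaces it for every consumer at once.
        self._metrics[metric.name] = metric

    def compile(self, metric_name: str, group_by: list[str]) -> str:
        """Spokes call this at query time instead of carrying their own SQL."""
        m = self._metrics[metric_name]
        select = ", ".join(group_by + [f"{m.expression} AS {m.name}"])
        sql = f"SELECT {select} FROM {m.table}"
        if m.filters:
            sql += " WHERE " + " AND ".join(m.filters)
        if group_by:
            sql += " GROUP BY " + ", ".join(group_by)
        return sql

registry = MetricRegistry()
registry.define(Metric("revenue", "SUM(amount - refunded)", "orders"))

def dashboard_query() -> str:  # spoke 1: a BI dashboard
    return registry.compile("revenue", ["region"])

def api_query() -> str:        # spoke 2: an internal metrics API
    return registry.compile("revenue", ["order_month"])

print(dashboard_query())
# Finance decides promotional credits no longer count as revenue.
# The change is made once, in the hub (credit_type is a hypothetical column).
registry.define(Metric("revenue", "SUM(amount - refunded)", "orders",
                       filters=("credit_type <> 'promotional'",)))
print(dashboard_query())
print(api_query())
```

Consumers compile at query time rather than caching SQL. That choice is what lets the promotional-credit change reach both the dashboard and the API without either one being edited.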

The technical implementation varies. Some semantic layers are YAML-defined and live alongside transformation code (dbt’s MetricFlow, Cube). Some are written in a BI platform’s own modeling language and embedded in that platform (LookML, Holistics AQL). Some are warehouse-native (Snowflake Semantic Views, Databricks Metric Views). The architectural pattern is consistent across all of them: define once, query everywhere, propagate changes automatically.

Why the conversation accelerated in 2026

For roughly a decade, the semantic layer was a feature people argued about but few teams actually invested in. The classic OLAP cubes of the 2000s had given the concept a slightly dated reputation, and most data teams treated metric consistency as a process problem to be solved with documentation and discipline. The argument was that if everyone agreed on what revenue meant, you did not need infrastructure to enforce it.

That position became untenable for two reasons, and both of them came to a head in late 2025 and early 2026.

The first reason is AI. When humans write SQL or build dashboards, they bring context with them — they know that the orders table excludes test transactions, that the customer_status field has three legacy values that should be remapped, that the fiscal calendar starts in February. AI agents do not bring that context. They read the schema and confidently produce queries that are technically correct and semantically wrong. Atlan’s analysis of how semantic layers function as the context layer for AI makes the case directly: without a governed source of business definitions, agentic analytics systems hallucinate metrics or return inconsistent answers depending on which model handled the request. Gartner has called a universal semantic layer a non-negotiable foundation for organizations deploying AI in 2026, framing it as the only credible way to manage AI debt and stop inconsistencies from spreading across multi-agent systems. As LLM-powered analytics moved from demo to production, the cost of inconsistent semantics moved from “annoying” to “actively dangerous.”

The second reason is the Open Semantic Interchange specification. OSI is a vendor-neutral standard for representing semantic layer constructs — datasets, metrics, dimensions, relationships, and context — in a format that can be exchanged between platforms. The v1.0 specification was published on January 27, 2026, under an Apache 2.0 license, with founding contributions from Snowflake, dbt Labs, Salesforce, Databricks, ThoughtSpot, Cube, Atlan, and roughly two dozen other vendors. dbt Labs simultaneously open-sourced MetricFlow under the same license, removing the prior commercial restrictions on the engine that powers dbt’s semantic layer.
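A round trip shows what “exchanged between platforms” means in practice: one tool serializes a definition to a neutral document, and another reads it back unchanged. This Python sketch is not the OSI v1.0 schema. Its field names (`dataset`, `dimensions`, `metrics`) are invented for illustration, and relationships and context are left out for brevity.

```python
import json
from dataclasses import dataclass, asdict

@dataclass(frozen=True)
class Dimension:
    name: str
    column: str

@dataclass(frozen=True)
class MetricDef:
    name: str
    expression: str
    description: str

@dataclass(frozen=True)
class SemanticModel:
    dataset: str
    dimensions: tuple  # tuple of Dimension
    metrics: tuple     # tuple of MetricDef

def export_model(model: SemanticModel) -> str:
    """Write the definitions as a tool-agnostic JSON document."""
    return json.dumps(asdict(model), indent=2, sort_keys=True)

def import_model(document: str) -> SemanticModel:
    """Rebuild the same definitions from the JSON document in another tool."""
    raw = json.loads(document)
    return SemanticModel(
        dataset=raw["dataset"],
        dimensions=tuple(Dimension(**d) for d in raw["dimensions"]),
        metrics=tuple(MetricDef(**m) for m in raw["metrics"]),
    )

orders = SemanticModel(
    dataset="orders",
    dimensions=(Dimension("region", "region"),
                Dimension("order_month", "DATE_TRUNC('month', ordered_at)")),
    metrics=(MetricDef("revenue", "SUM(amount - refunded)",
                       "Invoiced revenue net of refunds"),),
)

document = export_model(orders)          # written by tool A
print(import_model(document) == orders)  # read by tool B with nothing lost: True
```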

Before OSI, choosing a semantic layer meant choosing a vendor. Definitions written in LookML stayed in Looker. Definitions written in Cube schemas stayed in Cube. The migration cost between platforms was high enough that “semantic layer” effectively meant “permanent commitment to one BI tool.” OSI is an attempt to make those definitions portable. Whether it succeeds will depend on adoption (Microsoft, SAP, Oracle, and IBM are all conspicuously absent from the founding partners), but the existence of a credible open standard has already changed how teams evaluate the semantic-layer decisions they make today.

The implementation paths data teams actually take

There is no universal correct answer to “which semantic layer should we use,” but the realistic options cluster into four patterns, each suited to a different starting point.

Warehouse-native semantic layers

Snowflake Semantic Views and Databricks Metric Views embed the semantic layer directly in the data warehouse. Definitions live as first-class catalog objects alongside tables and views. This appeals to organizations that want the fewest possible moving parts and that are committed to a single warehouse for the foreseeable future.

The advantage is operational simplicity. There is no separate service to deploy, no additional billing relationship, no compatibility matrix to maintain between the semantic layer and the underlying compute. The disadvantage is portability. Snowflake Semantic Views only function within Snowflake. If some of your data lives outside that warehouse (a multi-cloud strategy, for example), or some consumers bypass its semantic objects (a reverse ETL flow or an embedded analytics product), the warehouse-native option leaves part of your stack without semantic coverage.

Transformation-coupled semantic layers (dbt)

The dbt Semantic Layer, powered by MetricFlow, defines metrics in YAML files that live alongside dbt models in version control. The architectural premise is that semantic definitions are part of the same artifact that produces the data: the model that builds the orders table is reviewed in the same pull request as the metric definition that aggregates it.
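Because the model and the metric travel in the same pull request, a CI job can check one against the other before anything merges. dbt and MetricFlow ship their own validation tooling. The Python below does not use that tooling; it is a hypothetical illustration of the idea, with invented `ModelSchema` and `MetricSpec` types.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ModelSchema:
    name: str
    columns: frozenset  # columns the transformation model produces

@dataclass(frozen=True)
class MetricSpec:
    name: str
    model: str           # model the metric aggregates
    measure_column: str  # column being aggregated
    dimensions: tuple    # columns the metric may be grouped by

def check_metrics(models, metrics):
    """Return human-readable errors; an empty list means the PR can merge."""
    by_name = {m.name: m for m in models}
    errors = []
    for metric in metrics:
        model = by_name.get(metric.model)
        if model is None:
            errors.append(f"{metric.name}: unknown model '{metric.model}'")
            continue
        for col in (metric.measure_column, *metric.dimensions):
            if col not in model.columns:
                errors.append(f"{metric.name}: column '{col}' missing from {model.name}")
    return errors

# The metric as it exists on the main branch.
revenue = MetricSpec("revenue", "orders", "amount", ("region", "ordered_at"))

# Main branch: the orders model still produces `amount`.
main_orders = ModelSchema("orders", frozenset({"order_id", "amount", "region", "ordered_at"}))
# Pull request: someone renames `amount` to `gross_amount` in the model.
pr_orders = ModelSchema("orders", frozenset({"order_id", "gross_amount", "region", "ordered_at"}))

print(check_metrics([main_orders], [revenue]))  # []
print(check_metrics([pr_orders], [revenue]))    # ["revenue: column 'amount' missing from orders"]
```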

This is the dominant choice for teams that already use dbt as their transformation tool, which describes a large share of the market. The CI/CD workflow is mature, metric changes are auditable through git history, and the integration with downstream BI tools and AI applications has expanded steadily through 2025 and 2026. The trade-off is that the semantic layer’s capabilities are coupled to dbt’s release cadence and dbt Cloud’s pricing for production semantic queries.

BI-embedded semantic layers (LookML, Power BI semantic models, Tableau Semantics)

Looker popularized the BI-native semantic layer with LookML, and the category has matured significantly as Power BI and Tableau have added their own equivalents. The strength is tight integration with visualization: the semantic model and the dashboards consuming it live in the same environment, with no API calls or compatibility layers between them.

The weakness is what happens when you outgrow the BI tool. Migrating LookML to a different platform is genuinely difficult, and organizations that built their semantic layer inside Looker before the OSI specification existed are now reckoning with the cost of moving definitions out. Teams making this choice today should treat the BI vendor lock-in as a deliberate decision rather than an architectural accident.

Headless and standalone semantic layers (Cube, AtScale)

Headless semantic layers are designed to sit between the warehouse and any number of consuming applications, exposing metrics through APIs rather than coupling them to a specific BI tool or transformation framework. Cube, which open-sourced its core engine and offers Cube Cloud as a managed service, is the most prominent example. AtScale focuses on enterprise-scale virtualization across multiple warehouses.

This pattern fits organizations building custom analytics applications, embedded analytics products, or multi-tool environments where metrics need to be consumed identically by Tableau dashboards, Streamlit apps, and AI agents. The cost is operational complexity: you are running another service, with its own deployment model, scaling characteristics, and failure modes.
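The headless idea comes down to one rule: every consumer sends the same kind of request and gets the same answer back, whatever kind of consumer it is. Cube, for example, exposes REST, GraphQL, and SQL interfaces, each with its own request format. The JSON shape and the `handle_query` function below are hypothetical, and the sketch returns compiled SQL rather than executing it.

```python
import json

# Hypothetical catalog held by the headless service.
METRICS = {
    "revenue": {"table": "orders", "expression": "SUM(amount - refunded)"},
    "order_count": {"table": "orders", "expression": "COUNT(*)"},
}
DIMENSIONS = {"orders": {"region", "order_month"}}

def handle_query(request_body: str) -> str:
    """Turn a JSON request from any consumer into compiled SQL, returned as JSON."""
    req = json.loads(request_body)
    metrics = req.get("metrics", [])
    dims = req.get("dimensions", [])
    unknown = [m for m in metrics if m not in METRICS]
    if not metrics or unknown:
        return json.dumps({"error": f"unknown or missing metrics: {unknown}"})
    tables = {METRICS[m]["table"] for m in metrics}
    if len(tables) != 1:
        return json.dumps({"error": "this sketch only supports metrics from a single table"})
    table = tables.pop()
    bad_dims = [d for d in dims if d not in DIMENSIONS.get(table, set())]
    if bad_dims:
        return json.dumps({"error": f"unknown dimensions: {bad_dims}"})
    select = dims + [f"{METRICS[m]['expression']} AS {m}" for m in metrics]
    sql = f"SELECT {', '.join(select)} FROM {table}"
    if dims:
        sql += " GROUP BY " + ", ".join(dims)
    return json.dumps({"sql": sql})

# A dashboard, a Streamlit app, and an AI agent send the same request...
body = json.dumps({"metrics": ["revenue", "order_count"], "dimensions": ["region"]})
responses = {c: handle_query(body) for c in ("dashboard", "streamlit_app", "ai_agent")}
# ...and receive identical answers.
print(len(set(responses.values())))  # 1
```

The handler deliberately ignores who is calling. Consistency comes from the service, not from each consumer behaving well.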

What changes downstream when semantic layers are in place

Implementing a semantic layer is not a discrete project that ends. It changes how the rest of the data stack is built and consumed.

Dashboards become thinner. When metrics are defined in the semantic layer, the BI tool stops being a place where business logic lives and becomes a presentation layer. A dashboard’s job is to choose which metrics to display, which filters to apply, and how to visualize the result. The actual definition of what “revenue” means is no longer the dashboard’s responsibility, which removes a major source of dashboard sprawl.

Self-service analytics becomes more reliable. The common failure mode of self-service tools is that they give business users enough access to query data, but not enough context to query it correctly. A semantic layer constrains the questions a non-technical user can ask to questions the data team has explicitly endorsed. The user gets autonomy on dimensions and filters; the data team retains control of the underlying calculation.
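That division of labor can be expressed as a query builder that lets users choose dimensions and filters but never touch the aggregate expression. This is a hypothetical Python sketch: `GovernedMetric`, `Filter`, and the `user_activity` table are invented. Filter values are passed as `?` placeholders (the qmark style used by Python’s `sqlite3` module) rather than spliced into the SQL string.

```python
from dataclasses import dataclass

ALLOWED_OPERATORS = {"=", "<>", ">", ">=", "<", "<="}

@dataclass(frozen=True)
class GovernedMetric:
    name: str
    expression: str                # owned by the data team, never by the user
    table: str
    allowed_dimensions: frozenset  # dimensions the data team has endorsed

@dataclass(frozen=True)
class Filter:
    dimension: str
    operator: str
    value: object

def build_query(metric, dimensions, filters):
    """Let users choose dimensions and filters; the calculation stays fixed."""
    for d in list(dimensions) + [f.dimension for f in filters]:
        if d not in metric.allowed_dimensions:
            raise ValueError(f"'{d}' is not an endorsed dimension for {metric.name}")
    for f in filters:
        if f.operator not in ALLOWED_OPERATORS:
            raise ValueError(f"operator '{f.operator}' is not allowed")
    select = list(dimensions) + [f"{metric.expression} AS {metric.name}"]
    sql = f"SELECT {', '.join(select)} FROM {metric.table}"
    params = [f.value for f in filters]
    if filters:
        sql += " WHERE " + " AND ".join(f"{f.dimension} {f.operator} ?" for f in filters)
    if dimensions:
        sql += " GROUP BY " + ", ".join(dimensions)
    return sql, params

ACTIVE_USERS = GovernedMetric(
    name="active_users",
    expression="COUNT(DISTINCT user_id)",
    table="user_activity",
    allowed_dimensions=frozenset({"plan", "country", "week"}),
)

sql, params = build_query(ACTIVE_USERS, ["plan"], [Filter("country", "=", "DE")])
print(sql)     # SELECT plan, COUNT(DISTINCT user_id) AS active_users FROM user_activity WHERE country = ? GROUP BY plan
print(params)  # ['DE']
```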

AI integration becomes much less prone to hallucinated metrics. The most common failure mode of natural-language analytics is that the LLM produces a confident answer that uses the wrong metric. With a semantic layer in place, the LLM is constrained to query through governed definitions rather than write SQL directly against the warehouse. This is the architectural pattern behind the more reliable AI analytics implementations shipping today. It is also why platforms that emphasize statistical rigor, like QuantumLayers, which has written about how statistical preprocessing prevents AI from surfacing spurious patterns, increasingly treat semantic consistency as a prerequisite rather than a nice-to-have.
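One common way to enforce that constraint is to have the model emit a structured request instead of SQL, then validate it before anything reaches the warehouse. The sketch below assumes a hypothetical JSON convention (a `metrics` list and a `dimensions` list) and treats the model’s output as a plain string, so no particular LLM SDK is implied. A rejection lists the available metrics, which gives the model something concrete to retry with.

```python
import json

# Hypothetical governed catalog the model is allowed to draw from.
GOVERNED_METRICS = {"revenue", "active_users", "conversion_rate"}
GOVERNED_DIMENSIONS = {"region", "month", "plan"}

def _reject(message: str) -> dict:
    return {"ok": False, "error": message}

def validate_tool_call(raw_model_output: str) -> dict:
    """Accept only structured requests for governed metrics; never raw SQL."""
    try:
        call = json.loads(raw_model_output)
    except json.JSONDecodeError:
        return _reject("Output was not valid JSON.")
    if not isinstance(call, dict):
        return _reject("Output must be a JSON object.")
    if "sql" in call:
        return _reject("Raw SQL is not accepted; request governed metrics by name.")
    metrics = call.get("metrics")
    dims = call.get("dimensions", [])
    if not isinstance(metrics, list) or not metrics or not isinstance(dims, list):
        return _reject("Provide a non-empty 'metrics' list and an optional 'dimensions' list.")
    if not all(isinstance(x, str) for x in metrics + dims):
        return _reject("Metric and dimension names must be strings.")
    unknown_metrics = sorted(set(metrics) - GOVERNED_METRICS)
    unknown_dims = sorted(set(dims) - GOVERNED_DIMENSIONS)
    if unknown_metrics or unknown_dims:
        return _reject(
            f"Unknown metrics {unknown_metrics} or dimensions {unknown_dims}. "
            f"Available metrics: {sorted(GOVERNED_METRICS)}; "
            f"available dimensions: {sorted(GOVERNED_DIMENSIONS)}."
        )
    return {"ok": True, "request": {"metrics": metrics, "dimensions": dims}}

# A model that tries to write its own SQL is turned away...
print(validate_tool_call('{"sql": "SELECT SUM(amount) FROM orders"}')["ok"])  # False
# ...while a request phrased in governed terms passes through.
print(validate_tool_call('{"metrics": ["revenue"], "dimensions": ["region"]}'))
```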

Statistical analysis becomes meaningful across teams. When marketing’s “active user” and product’s “active user” are the same number, comparing channel acquisition to retention curves stops requiring a reconciliation step. The cohort analysis the growth team runs and the retention analysis the product team runs can be combined without three days of definitional debate.

Where the technology is heading

Three trends are shaping what semantic layers will look like by the end of 2026 and into 2027.

The first is interoperability. OSI’s Phase 2 roadmap targets native support in 50 or more platforms by the end of 2026, along with domain-specific extensions for verticals like finance and healthcare. If that adoption materializes, the choice of semantic engine will become much less consequential, because definitions will move between engines without rewriting.

The second is convergence with data catalogs. Catalogs document what data exists; semantic layers define what it means. The two have historically been separate products, but they are converging, and it is no coincidence that Atlan, Alation, Collibra, and DataHub are all participating in OSI. The end state is a single governance surface that covers inventory, lineage, and meaning together.

The third is the agentic semantic layer. As AI agents move from single-prompt assistants to multi-agent orchestration, every agent in a workflow needs access to the same semantic definitions. Without shared grounding, sub-agents return results using metric logic that contradicts what the orchestrator expects. The agentic semantic layer exposes business logic to AI agents through a programmatic API designed for machine consumption, not just BI tool integration. This is the use case OSI was explicitly designed to support, and it is the architectural assumption baked into the new generation of agentic analytics platforms — including QuantumLayers’ QL-Agent, which orchestrates ingestion, querying, visualization, and reporting through a single prompt rather than asking the user to redefine business logic at each step.
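One way to give every agent in a workflow the same grounding, and to detect when one has drifted, is to publish the catalog with a version fingerprint and have the orchestrator check that fingerprint on every result. The Python below is a hypothetical sketch. The catalog contents, `AgentResult`, and the 12-character SHA-256 prefix are illustrative choices, not part of OSI or any agent framework.

```python
import hashlib
import json
from dataclasses import dataclass

# Hypothetical shared catalog published to every agent in the workflow.
CATALOG = {
    "revenue": {"table": "orders", "expression": "SUM(amount - refunded)"},
    "active_users": {"table": "user_activity", "expression": "COUNT(DISTINCT user_id)"},
}

def catalog_fingerprint(catalog: dict) -> str:
    """Stable short hash of the definitions; key order does not affect it."""
    canonical = json.dumps(catalog, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]

def catalog_for_agents(catalog: dict) -> str:
    """Machine-readable payload an agent receives before it plans any query."""
    return json.dumps({"version": catalog_fingerprint(catalog), "metrics": catalog},
                      sort_keys=True)

@dataclass(frozen=True)
class AgentResult:
    agent: str
    metric: str
    value: float
    catalog_version: str  # fingerprint the sub-agent says it used

def reconcile(results, expected_version):
    """Orchestrator-side check: set aside results computed against other definitions."""
    accepted, rejected = [], []
    for r in results:
        (accepted if r.catalog_version == expected_version else rejected).append(r)
    return accepted, rejected

current = catalog_fingerprint(CATALOG)
# A sub-agent still holding an older definition of revenue that ignored refunds.
stale_catalog = {**CATALOG, "revenue": {"table": "orders", "expression": "SUM(amount)"}}
stale = catalog_fingerprint(stale_catalog)

results = [
    AgentResult("forecasting_agent", "revenue", 2100.0, current),
    AgentResult("reporting_agent", "revenue", 2300.0, stale),
]
accepted, rejected = reconcile(results, current)
print([r.agent for r in accepted], [r.agent for r in rejected])
```

Hashing a canonical serialization, rather than trusting a hand-maintained version number, means any edit to a definition changes the version automatically.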

What to do if you are starting from zero

If your team does not have a semantic layer today and you are wondering where to start, the answer depends less on technology and more on what you have already built.

If you are heavily invested in dbt for transformations, the dbt Semantic Layer is the path of least resistance. Start by defining your three to five most disputed metrics — the ones that come up in every meeting — in MetricFlow, and route at least one downstream consumer through them.

If you are committed to a single warehouse and a single BI tool, the warehouse-native option (Snowflake Semantic Views, Databricks Metric Views) minimizes operational overhead at the cost of portability. This is a reasonable choice if you are confident the commitment will hold.

If you are building custom analytics applications or operating a multi-tool environment, a headless option like Cube gives you the flexibility to expose metrics consistently across consumers that have nothing else in common.

In all three cases, do not try to model every metric in your business at once. Start with the small set of metrics that already cause the most consistency problems — revenue, active users, conversion rate, whatever your version of those is — and expand from there. Semantic layer projects that try to be complete from day one tend to stall before producing any consuming application, which is the only thing that actually creates organizational pull for the discipline.

The bottom line

The semantic layer is no longer an architectural curiosity. It is becoming the core of how modern data teams handle the gap between raw data and the people, applications, and AI systems that consume it. The standardization push behind OSI, the convergence of vendors that previously competed on incompatible models, and the operational pressure created by AI agents that need governed context have all aligned to push the category into the mainstream within a remarkably short window.

For data teams, the practical implication is that “we will sort out metric definitions later” is no longer a credible plan. The cost of inconsistency compounds with every new dashboard, every new analyst, and every new AI system that reads from the warehouse. The teams investing in semantic infrastructure today are not solving a 2026 problem. They are paying down debt that would have made every analytics initiative for the next five years more expensive.

Lurika is an independent publication covering data analytics for non-technical teams. We are not owned by any analytics vendor.