Data Contracts: How Data Teams Are Stopping Schema Changes from Breaking Production

Last updated: May 2026

A backend engineer renames a column in a production database. It is a perfectly reasonable refactor. The column has been there for three years, the new name is clearer, and the migration script handles the rename cleanly. The service tests pass. The deploy goes out on a Tuesday afternoon.

By Wednesday morning, the marketing dashboard is empty. The finance reconciliation job has crashed. Three downstream dbt models are flagging errors that nobody on the data team can immediately diagnose. The exec presentation scheduled for Thursday is in jeopardy because nobody can confirm whether last quarter’s revenue figure is still correct.

Nobody did anything wrong. The engineer had no way of knowing that six analytics pipelines, two reverse ETL syncs, and a customer-facing reporting feature all depended on that specific column name. The data team had no way of seeing the change before it shipped. The breakage happened in the seam between two systems that had no formal agreement about how they were supposed to interact.

Data contracts exist to close that seam. After several years of conference talks and theoretical debate, the category has matured into something data teams can actually deploy. The standards have stabilized. The tooling has consolidated. And the operational pressure created by AI workloads, which require fresh and reliable data the moment a model goes to production, has turned data contracts from an aspirational idea into a line item on 2026 platform roadmaps.

What a data contract is, in concrete terms

A data contract is a formal agreement between the producer of a dataset and its consumers. It specifies what the data looks like, how it is supposed to behave, and what guarantees come with it. Schema, semantics, freshness, ownership, and quality rules are all encoded in a single artifact that lives in version control, gets reviewed in pull requests, and can be checked programmatically in CI/CD.

The minimum useful contract covers three things. First, the schema: column names, types, nullability, and primary keys. Second, the business logic: what each field actually means, what values are valid, and what relationships exist between fields. Third, the service-level expectations: how often the data refreshes, how stale it is allowed to get before it counts as broken, and who gets paged when it does break.

Chad Sanderson, who has done more than anyone to popularize the modern data contract pattern, describes the model directly: contracts are APIs for data, treated with the same rigor that backend teams apply to their service APIs. The producer commits to maintaining a stable interface. The consumer commits to building against that interface and not the underlying implementation. Breaking changes go through a deprecation cycle. The whole thing is enforced by tests, not by goodwill.

The phrasing matters because it reframes the problem. Without a contract, the implicit agreement between the engineer who owns the production database and the analyst who builds dashboards on top of it is “do not change anything that I happen to be relying on, even though I have not told you what I am relying on.” That is not an agreement. It is a trap. Contracts replace it with an explicit interface that both sides can reason about.

Why the category got serious in 2025 and 2026

Data contracts have been discussed since at least 2021, when Andrew Jones documented the original GoCardless implementation. What changed recently is that the conditions for adoption finally aligned.

The first shift was the publication of an open standard. The Open Data Contract Standard (ODCS), maintained by the Bitol project under the Linux Foundation AI and Data umbrella, reached version 3.1.0 in December 2025. The standard is YAML-based, Apache 2.0 licensed, and supported by a growing list of vendors. It covers schema, data quality rules, SLAs, stakeholders, and pricing in a single specification, with machine-readable JSON Schema validation that lets contracts be checked automatically at write time. Before ODCS, every data contract implementation was custom-built. After ODCS, teams have a portable format that travels between tools.

The second shift was AI workloads moving into production. When the data layer was feeding monthly reports, a broken pipeline was an inconvenience. When it is feeding a customer-facing AI feature that responds in real time, a broken pipeline is a customer-facing incident. Industry research from 2026 reports that 56 percent of data professionals now cite poor data quality as their primary challenge, a substantial increase over prior years, and the rise tracks closely with the move from analytical AI experiments to AI in production.

The third shift was convergence with adjacent infrastructure. Schema registries, data catalogs, semantic layers, and observability platforms all matured to the point where they could host or consume contract definitions. Many of the same vendors involved in the Open Semantic Interchange specification published in early 2026 are also active in the data contract conversation, and the two specifications are increasingly designed to work together.

The two architectural patterns that actually ship

Most working implementations fall into one of two patterns.

In the producer-defined pattern, the contract lives next to the code that generates the data. A backend engineer writing a service that emits an OrderPlaced event also writes a contract specifying what fields the event contains, what they mean, and what guarantees come with them. The contract is reviewed in the same pull request as the service code. CI/CD validates that new code still emits data conforming to the contract, and a schema registry like Confluent Schema Registry or AWS Glue Schema Registry rejects events at runtime that fail to match. This is the cleanest approach when the producing team has the engineering bandwidth to participate, but it requires producers to treat downstream analytics consumers as legitimate customers. In organizations where data is still treated as exhaust from production systems, the producer-defined pattern will not stick.
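The CI step in the producer-defined pattern can be sketched as a diff between the schema on the main branch and the schema in the pull request. This is a minimal illustration, not the behavior of any particular schema registry: the function name `breaking_changes`, the dictionary layout, and the rule set (removed columns, changed types, and columns that become nullable count as breaking; added columns do not) are all assumptions chosen for the example.

```python
# Hypothetical schema snapshots: column name -> {"type": ..., "nullable": ...}.
OLD_SCHEMA = {
    "order_id": {"type": "string", "nullable": False},
    "order_total_cents": {"type": "integer", "nullable": False},
}

# The pull request renames order_total_cents to total_cents.
NEW_SCHEMA = {
    "order_id": {"type": "string", "nullable": False},
    "total_cents": {"type": "integer", "nullable": False},
}


def breaking_changes(old_schema, new_schema):
    """Return a list of changes that would break existing consumers."""
    problems = []
    for column, old in old_schema.items():
        new = new_schema.get(column)
        if new is None:
            # A rename looks like a removal to anyone reading the old name.
            problems.append(f"column removed or renamed: {column}")
        elif new["type"] != old["type"]:
            problems.append(
                f"type changed: {column} {old['type']} -> {new['type']}"
            )
        elif new["nullable"] and not old["nullable"]:
            problems.append(f"column became nullable: {column}")
    # Columns that exist only in new_schema are additive, so they pass.
    return problems
```

A CI job could call `breaking_changes(OLD_SCHEMA, NEW_SCHEMA)` and fail the pull request whenever the returned list is non-empty, which is the point at which the rename from the opening story would have been caught.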

In the consumer-driven pattern, the contract lives at the boundary between raw ingested data and the curated tables downstream applications actually use. Raw data lands in the warehouse through Fivetran, Airbyte, or a custom pipeline. A dbt model transforms it into a curated table that has a contract attached to it. Tests run during the dbt build to verify the contract holds. If a producer ships an upstream change that breaks the curated table, the build fails before any downstream consumer sees the problem. This pattern is more common today because the data team can ship contracts unilaterally. The trade-off is that breakage detection is reactive. The producer still ships the breaking change. The data team still has to fix it. The contract just moves the failure from “broken dashboard at 9 AM Thursday” to “failed build at 2 AM Wednesday,” which is a meaningful improvement but not the full vision.
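The consumer-driven gate can be sketched in a few lines of plain Python. In practice this check would usually run as dbt tests during the build; the version below only illustrates the idea, and the names `ContractViolation`, `EXPECTED_COLUMNS`, and `enforce_contract` are hypothetical.

```python
class ContractViolation(Exception):
    """Raised when a raw batch does not satisfy the curated table's contract."""


# Hypothetical expectations for the curated table: column name -> Python type.
EXPECTED_COLUMNS = {"order_id": str, "order_total_cents": int}


def enforce_contract(rows, expected=EXPECTED_COLUMNS):
    """Return rows unchanged if every row conforms, otherwise raise.

    Raising here stands in for a failed build: nothing downstream runs.
    """
    for index, row in enumerate(rows):
        for column, expected_type in expected.items():
            if column not in row:
                raise ContractViolation(
                    f"row {index}: missing column {column!r}"
                )
            if not isinstance(row[column], expected_type):
                raise ContractViolation(
                    f"row {index}: {column!r} is not {expected_type.__name__}"
                )
    return rows
```

If an upstream rename turns `order_total_cents` into something else, `enforce_contract` raises on the first affected row and the curated table is never rebuilt from the bad batch. The producer has still shipped the change, which is the reactive trade-off described above.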

Most mature data organizations end up running both patterns simultaneously. Producer-defined contracts cover the highest-impact upstream sources where engineering has bought in. Consumer-driven contracts cover everything else.

What a contract actually looks like

A contract for an orders table includes a schema section with columns, types, and constraints (order_id is a UUID string, primary key, not nullable; order_total_cents is an integer that must be greater than or equal to zero). A semantics section explains what the table represents (an orders row is created when a customer completes checkout; refunds do not delete the row, they update a separate order_status field). A data quality section encodes rules that should hold continuously (the daily row count should be within three standard deviations of the trailing 30-day average; no more than 0.1 percent of rows may have a null customer_id). An SLA section specifies operational guarantees (refresh every 15 minutes during business hours; data older than two hours is stale). An ownership section names the producing team, the consuming teams, and the escalation paths.

That is the entire contract. Human-readable, machine-checkable, version-controlled. Every downstream tool that needs to know what the orders table is can read the same source.
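To make the five sections concrete, here is one way the orders contract could be held as a single structured object. This is a sketch in Python dataclasses, not the ODCS YAML layout: the class names, field names, and team names are invented for illustration, and only the values quoted above (the column rules, the quality thresholds, the 15-minute and two-hour SLA figures) come from the example.

```python
import json
from dataclasses import asdict, dataclass
from typing import List, Optional


@dataclass
class Column:
    name: str
    type: str
    nullable: bool = True
    primary_key: bool = False
    minimum: Optional[int] = None


@dataclass
class Contract:
    table: str
    columns: List[Column]
    semantics: str
    quality_rules: List[str]
    sla: dict
    ownership: dict


orders = Contract(
    table="orders",
    columns=[
        Column("order_id", "uuid_string", nullable=False, primary_key=True),
        # Nullability of order_total_cents is an assumption for this sketch.
        Column("order_total_cents", "integer", nullable=False, minimum=0),
    ],
    semantics=(
        "A row is created when a customer completes checkout. "
        "Refunds update order_status instead of deleting the row."
    ),
    quality_rules=[
        "daily row count within 3 standard deviations of trailing 30-day average",
        "at most 0.1 percent of rows have a null customer_id",
    ],
    sla={"refresh_minutes": 15, "stale_after_minutes": 120},
    # Team names and the escalation target are placeholders.
    ownership={
        "producer": "checkout-team",
        "consumers": ["analytics", "finance"],
        "escalation": "checkout-oncall",
    },
)

# One serialized artifact that any downstream tool can parse.
serialized = json.dumps(asdict(orders), indent=2)
```

Because `serialized` is plain JSON, a catalog, a test runner, and an alerting tool can all load the same definition instead of keeping their own copies.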

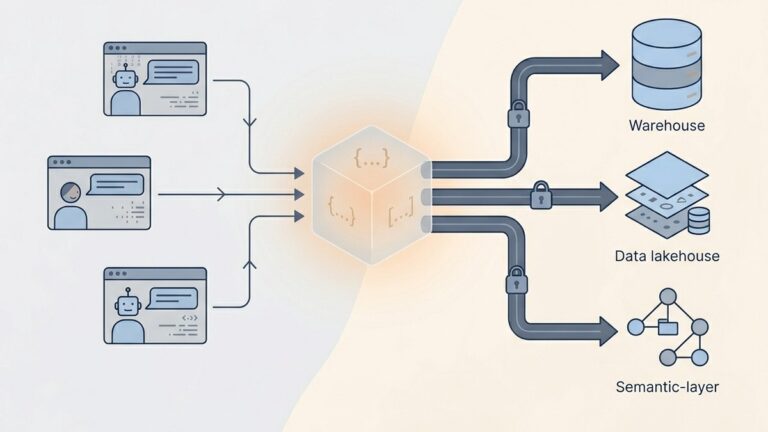

How contracts intersect with the rest of the stack

A contract by itself does nothing. What makes contracts useful is the surrounding tooling that reads, validates, and reacts to them.

Transformation tools consume contracts as inputs. dbt’s model contracts feature lets a model declare the schema it commits to producing and fails the build if the output drifts. dbt Labs has been explicit about positioning the semantic layer as the governance layer for AI, and contracts at the model level are the foundation that the semantic layer sits on top of.

Observability platforms validate contracts continuously. Monte Carlo, Bigeye, and the open-source Soda framework all read contract definitions and run automated checks that compare actual data behavior to contractual guarantees. The result is alerting that fires the moment a contract is violated, with the contract itself providing the context for what “violated” means. Without contracts, observability tools have to infer what is normal from historical patterns, which works but produces false positives. With contracts, the tool is checking against an explicit specification.

AI and analytics platforms use contracts as the trust layer. When an LLM-driven analytics tool generates a query against the warehouse, the contract is what tells it which tables are stable, what the columns mean, and what filters need to be applied to get correct results. Platforms that prioritize statistical rigor in AI-driven analysis, like QuantumLayers, depend on this kind of governed input. The platform connects to multi-source data and runs automated statistical tests before passing results to a language model, which is why it treats statistical preprocessing as a precondition for reliable AI insights rather than something to bolt on afterwards. A statistical test on top of badly defined data is just a more expensive way to be wrong, and contracts are how the data gets defined before the analysis starts.

Catalogs and discovery tools index contracts as canonical metadata. Atlan, Alation, and DataHub all treat contracts as first-class objects in their data inventory, which means a developer searching for “what is the orders table” gets the contract as the answer rather than a stale wiki page.

The mistakes that derail data contract initiatives

Data contract projects fail more often than they succeed. The pattern of failure is usually one of the following.

Trying to put everything under contract on day one. A team announces a data contracts initiative, decides every table in the warehouse needs coverage, and burns six months producing YAML files that nobody is using. The right starting set is small. Pick the three to five tables that produce the most incidents, are referenced by the most downstream consumers, or block the most high-stakes decisions. Get those under contract. Ship something that catches a real breakage in production. Use the win to fund expansion.

Confusing the contract with the documentation. A contract is not a markdown file in Confluence describing the table. It is a machine-readable specification that gets enforced. If the contract is not running in CI/CD or being checked by an observability tool, it is documentation, and documentation drifts from reality the moment it is written. The line between contract and doc is enforcement.

Letting producers off the hook. Consumer-driven contracts are useful, but if the only contracts in the organization are owned by the data team, the producer-side incentive to avoid breaking changes never forms. Over time, the data team becomes a complaint department that catches breakages after the fact. The contract has to create accountability on the producing side, which means producers need to know when their change broke a contract and feel some operational pain when it happens.

Skipping the data quality dimension. A contract that only specifies schema misses the most common failure mode in modern data platforms, which is not “the column disappeared” but “the column is still there and now contains garbage.” Quality rules around freshness, completeness, and value distributions are what catch the silent failures. ODCS makes these first-class fields in the specification for a reason.
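The three kinds of quality rule named here can each be expressed as a small check. The sketch below uses the thresholds from the orders example earlier in the article (two-hour staleness, three standard deviations on row count); the function names are hypothetical, and a real deployment would more likely declare these rules in a tool such as Soda or Great Expectations than hand-roll them.

```python
from datetime import datetime, timedelta
from statistics import mean, stdev


def is_stale(last_refreshed, now, stale_after=timedelta(hours=2)):
    """Freshness: True when the last refresh is older than the allowed window."""
    return now - last_refreshed > stale_after


def null_fraction(rows, column):
    """Completeness: share of rows where the column is missing or None."""
    if not rows:
        return 0.0
    nulls = sum(1 for row in rows if row.get(column) is None)
    return nulls / len(rows)


def row_count_in_range(today_count, history, max_sigma=3.0):
    """Distribution: True when today's count is within max_sigma sample
    standard deviations of the mean of the trailing daily counts.

    history needs at least two values for stdev to be defined.
    """
    average = mean(history)
    spread = stdev(history)
    return abs(today_count - average) <= max_sigma * spread
```

A contract check would then compare the results against the contract's limits, for example `null_fraction(rows, "customer_id") <= 0.001` for the 0.1 percent rule. None of these checks would notice a renamed column, and a schema check would notice none of these failures, which is why a contract needs both.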

Building enforcement without escalation paths. A contract that fires an alert into a Slack channel nobody monitors is worse than no contract, because it creates the impression of safety without actually providing it. Every contract needs an owner, an on-call rotation, and an SLA on response time. If those do not exist, the contract is performative.

Where to start if you are starting now

Three steps cover most of the value for a team that does not have data contracts today.

First, identify the most painful recurring incident pattern in your warehouse. For most teams, this is either upstream schema changes that break dbt models or freshness violations that go undetected until a stakeholder complains. Whichever one you pick, it is the seed for your first contract.

Second, write the contract for the relevant table using the ODCS YAML format. The specification is reader-friendly, the examples are concrete, and the format is supported by a growing list of tooling. Even without enforcement on day one, having the contract in version control creates a shared reference point.

Third, pick one enforcement layer and wire it up. The lightest option is dbt model contracts, which require almost no new infrastructure if you already use dbt. The next option is a data quality framework like Soda or Great Expectations that reads the contract and runs continuous checks. The heaviest option is full schema registry integration at the producer level, which is appropriate only if the producing team is on board.

The point of starting narrow is not to stay narrow. It is to build operational muscle on a small surface before scaling to the full warehouse. Teams that try to cover everything immediately produce contracts that nobody enforces. Teams that start with one painful table and expand from there end the year with twenty contracts that all matter.

The bottom line

Data contracts are not a 2026 invention. The pattern has been working in pockets of the industry for several years. What is new is that the standards have stabilized, the tooling has matured, and the operational pressure from AI workloads has made the cost of inconsistent data impossible to ignore. The teams that invest in contracts this year are not solving an academic problem. They are paying down debt that compounds with every new dashboard, every new model, and every new AI feature shipped on top of the warehouse.

The teams that keep treating data quality as a post-hoc cleanup job are going to find that the cleanup gets more expensive as the surface grows. The ones that move the agreement to the front, encode it in code, and check it in CI/CD will spend less time firefighting and more time on the analyses that actually move the business.

Lurika is an independent publication covering data analytics. We are not owned by any analytics vendor. Some articles contain affiliate links that help fund our work at no cost to you and never influence our recommendations.