Best Data Observability Platforms for Data Teams in 2026

Last updated: May 2026

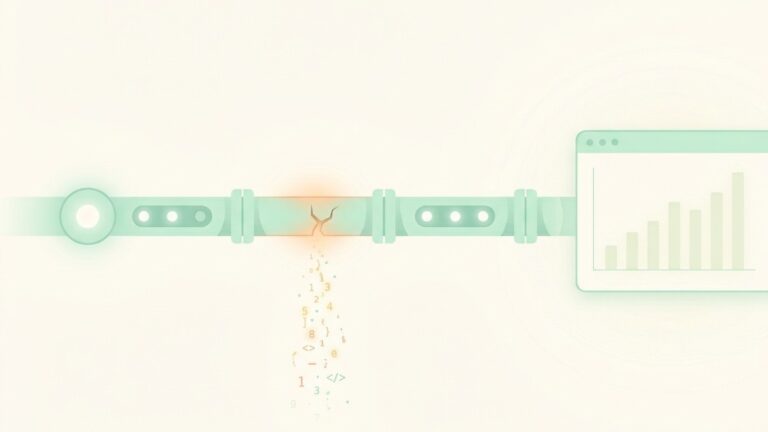

A data engineer at a mid-sized SaaS company wakes up to a Slack message from the CFO. The revenue dashboard is showing a 40 percent drop overnight, and three internal forecasts that depend on it are put on hold. Engineering scrambles to figure out what broke. Three hours later, the answer arrives. A schema change in an upstream Salesforce object renamed a field. The dbt model kept filtering on the old name and silently dropped every record that used the new one. As a result, the dashboard quietly stopped counting half the pipeline. The data was wrong for nine days before anyone caught it.

Stories like this are why data observability went from niche to necessary. As warehouses like Snowflake, BigQuery, and Databricks have become the operational backbone of analytics, AI, and revenue reporting, the cost of broken data has grown with them. A bad join no longer just means a wrong chart in a board deck. It can mean a misfired marketing campaign, an inaccurate model prediction, or a regulator asking questions.

Data observability platforms exist to catch these issues before they hit production dashboards or AI pipelines. They watch your data the way APM tools watch your services: monitoring freshness, volume, schema, distribution, and lineage, then alerting the right people when something looks off.
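
Schema monitoring is the easiest of these checks to picture. The sketch below is a minimal illustration, not any vendor's implementation. It assumes you can snapshot a table's columns as a `{column_name: data_type}` mapping, for example from the warehouse's `INFORMATION_SCHEMA.COLUMNS` view, and it compares two snapshots. The column names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SchemaChange:
    kind: str  # "removed", "added", or "type_changed"
    column: str
    detail: str = ""


def diff_schema(previous: dict[str, str], current: dict[str, str]) -> list[SchemaChange]:
    """Compare two {column_name: data_type} snapshots of the same table."""
    changes: list[SchemaChange] = []
    for col in sorted(previous.keys() - current.keys()):
        changes.append(SchemaChange("removed", col))
    for col in sorted(current.keys() - previous.keys()):
        changes.append(SchemaChange("added", col))
    for col in sorted(previous.keys() & current.keys()):
        if previous[col] != current[col]:
            changes.append(SchemaChange("type_changed", col, f"{previous[col]} -> {current[col]}"))
    return changes


# Hypothetical snapshots of a Salesforce-sourced staging table before and after a field rename.
before = {"id": "VARCHAR", "amount__c": "NUMBER", "stage": "VARCHAR"}
after = {"id": "VARCHAR", "net_amount__c": "NUMBER", "stage": "VARCHAR"}
for change in diff_schema(before, after):
    print(change)
```

A rename shows up as a removed column paired with an added one. This is the kind of signal that could surface a rename like the one in the opening story before it reaches a dashboard.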

The category has grown crowded, and the vendors no longer all look the same. Some are pipeline-first, some are catalog-first, some are open source, some are tightly coupled to dbt. We evaluated the platforms that data teams shortlist most often in 2026, looking specifically at coverage of the modern stack, automation depth, lineage quality, alert signal-to-noise, and how well each fits different team structures.

Here is the rundown.

1. Monte Carlo

Best for: Enterprise data teams that want broad, ML-driven coverage across the entire stack.

Website: montecarlodata.com

Monte Carlo is the platform that named the category. The company coined the term “data reliability engineer” and remains the default reference point when teams talk about data observability. Coverage spans warehouses, lakes, ETL tools, BI platforms, and increasingly AI pipelines, with field-level lineage that engineers consistently call out as one of the platform’s strengths.

Setup is genuinely fast for an enterprise tool. Out-of-the-box monitors cover freshness, volume, and schema changes across all connected tables without manual configuration. ML-powered anomaly detection learns normal patterns over a few days, then flags statistical drift, distribution shifts, and unusual row counts. The 2025 push into AI observability added agent monitoring and model output tracking, which matters for teams pushing into production AI workflows.
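
Monte Carlo's detection models are proprietary. The following is only a simple statistical stand-in for the idea of learning normal patterns: a z-score test on daily row counts against a trailing window. The threshold and minimum-history values are assumptions chosen for illustration.

```python
from statistics import mean, stdev


def is_volume_anomaly(history: list[int], today: int,
                      threshold: float = 3.0, min_points: int = 7) -> bool:
    """Flag today's row count if it is more than `threshold` standard deviations from the trailing mean."""
    if len(history) < min_points:
        return False  # still learning: not enough history for a baseline
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return today != mu  # perfectly flat history, so any change is unusual
    return abs(today - mu) / sigma > threshold


# A week of roughly 10,000 rows per day, then a 40 percent drop.
last_week = [10_000, 10_200, 9_900, 10_100, 10_050, 9_950, 10_000]
print(is_volume_anomaly(last_week, 6_000))   # True
print(is_volume_anomaly(last_week, 10_080))  # False
```

A flat z-score ignores weekly seasonality and trend. Production detectors typically model both, which is part of why commercial tools need a few days of history before alerting.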

The trade-off is pricing. Monte Carlo uses a credit-based, consumption model and does not publish list pricing. Annual contracts for mid-sized deployments commonly land in the $25K to $50K range, scaling well into six figures for larger estates. The platform is purpose-built for serious data infrastructure, not a quick experiment.

Best suited for: Mid-to-large data teams with established cloud warehouses, organizations running production AI or ML workloads, and teams where data downtime has measurable revenue or compliance impact.

Limitations: Pricing is opaque and scales with usage, so forecasting cost is harder than with flat-rate tools. Users report that trace and lineage data from some integrations, notably Airflow and dbt, is occasionally inaccurate. The platform is overkill for teams with fewer than a few dozen meaningful tables.

2. Bigeye

Best for: Mid-market and enterprise teams that want fast deployment and minimal configuration.

Website: bigeye.com

Bigeye built its reputation on time-to-value. The platform auto-profiles connected data sources, generates baseline metrics, and starts monitoring without requiring engineers to write rules first. For teams that have tried open-source quality testing and stalled on the maintenance overhead, this is the appeal: monitoring shows up automatically, and you tune from there.
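
Auto-profiling boils down to computing baseline statistics per column and storing them so later runs have something to compare against. This is a minimal in-memory sketch, not Bigeye's implementation. It assumes rows arrive as Python dicts, whereas a real platform would push these aggregates into SQL against the warehouse. The column names are hypothetical.

```python
from typing import Any

Row = dict[str, Any]


def profile_rows(rows: list[Row]) -> dict[str, dict[str, Any]]:
    """Compute simple baseline metrics for every column that appears in a sample of rows.

    Assumes scalar (hashable) values; a missing key is treated the same as NULL.
    """
    columns = sorted({col for row in rows for col in row})
    profile: dict[str, dict[str, Any]] = {}
    for col in columns:
        values = [row.get(col) for row in rows]
        non_null = [v for v in values if v is not None]
        stats: dict[str, Any] = {
            "null_rate": 1 - len(non_null) / len(values),
            "distinct_count": len(set(non_null)),
            "min": None,
            "max": None,
        }
        try:
            if non_null:
                stats["min"], stats["max"] = min(non_null), max(non_null)
        except TypeError:
            pass  # mixed, non-comparable types: leave min/max empty
        profile[col] = stats
    return profile


sample = [
    {"order_id": 1, "email": "a@example.com", "amount": 120.0},
    {"order_id": 2, "email": None, "amount": 80.5},
    {"order_id": 3, "email": "a@example.com", "amount": 42.0},
    {"order_id": 4, "amount": 99.9},
]
for column, stats in profile_rows(sample).items():
    print(column, stats)
```

Stored baselines like these are what later monitors compare against. For example, a jump in `null_rate` for `email` would trigger a distribution alert.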

The interface is one of the more accessible in the category, which matters when data observability needs buy-in from analysts, analytics engineers, and stakeholders who are not on the platform team. Lineage is solid, integrations cover the major warehouses and orchestrators, and SLA tracking gives data leaders something concrete to report up to executives.
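
An SLA report is ultimately a simple ratio. This is a hedged sketch that assumes each scheduled load is recorded as a deadline plus the time the data actually landed. The table and times below are hypothetical. Attainment over a window can then be computed like this:

```python
from datetime import datetime
from typing import Optional

Delivery = tuple[datetime, Optional[datetime]]  # (deadline, landed_at or None if it never landed)


def sla_attainment(deliveries: list[Delivery]) -> float:
    """Fraction of scheduled loads that landed at or before their deadline."""
    if not deliveries:
        raise ValueError("no scheduled deliveries in the reporting window")
    met = sum(1 for deadline, landed in deliveries
              if landed is not None and landed <= deadline)
    return met / len(deliveries)


# Hypothetical 06:00 freshness SLA for a revenue table over four days.
window = [
    (datetime(2026, 5, 1, 6), datetime(2026, 5, 1, 5, 40)),
    (datetime(2026, 5, 2, 6), datetime(2026, 5, 2, 5, 55)),
    (datetime(2026, 5, 3, 6), datetime(2026, 5, 3, 7, 15)),  # late
    (datetime(2026, 5, 4, 6), None),                          # never landed
]
print(f"{sla_attainment(window):.0%}")  # 50%
```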

Bigeye has leaned into the idea of “data reliability as a product,” which translates to features around ownership, on-call rotations, and incident workflows that look familiar to anyone coming from a software observability background.
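
Ownership plus on-call routing is the software-observability pattern being borrowed here. The sketch below is hypothetical and is not Bigeye's API. It assumes an ownership map from tables to teams and a weekly round-robin rotation per team, and it picks who gets paged when a table's monitor fires.

```python
from datetime import date


def on_call(rotation: list[str], rotation_start: date, today: date) -> str:
    """Weekly round-robin: the on-call engineer advances by one every 7 days."""
    if today < rotation_start:
        raise ValueError("rotation has not started yet")
    weeks_elapsed = (today - rotation_start).days // 7
    return rotation[weeks_elapsed % len(rotation)]


def route_alert(table: str, owners: dict[str, str], rotations: dict[str, list[str]],
                rotation_start: date, today: date, fallback_team: str = "data-platform") -> str:
    """Send an alert on `table` to whoever is on call for the owning team."""
    team = owners.get(table, fallback_team)  # unowned tables go to the fallback team
    return on_call(rotations[team], rotation_start, today)


OWNERS = {"fct_revenue": "finance-analytics"}
ROTATIONS = {"finance-analytics": ["ana", "ben", "chen"], "data-platform": ["dev", "eli"]}
start = date(2026, 5, 4)
print(route_alert("fct_revenue", OWNERS, ROTATIONS, start, date(2026, 5, 20)))  # chen
print(route_alert("stg_tmp", OWNERS, ROTATIONS, start, date(2026, 5, 20)))      # dev
```

Routing unowned tables to a fallback team keeps alerts from disappearing. An incident workflow would then track acknowledgment and resolution from that point.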

Best suited for: Data platform teams that want production-grade observability without a multi-quarter rollout, organizations with mixed warehouse and legacy environments, and teams where fast onboarding matters more than deep customization.

Limitations: Pricing is enterprise-tier and not transparent. Custom rule logic is less flexible than what you can write in code-based tools like Soda or Great Expectations. Coverage outside the modern data stack (mainframes, niche on-prem systems) is thinner than that of larger platforms.

3. Acceldata

Best for: Large enterprises with hybrid environments and infrastructure-level observability needs.

Website: acceldata.io

Acceldata takes a broader view than most of its competitors. Where Monte Carlo and Bigeye focus tightly on data quality and pipeline health, Acceldata combines those with compute and infrastructure observability. You get visibility into not just whether your data is correct, but whether your Snowflake spend is reasonable, whether your Spark jobs are performing, and where compute is being wasted.
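
To make the idea of wasted compute concrete, here is a back-of-the-envelope sketch for Snowflake. It is not Acceldata's method. It relies on Snowflake's published credit rates for standard warehouses: 1 credit per hour for X-Small, doubling with each size. It assumes you already know how long a warehouse ran versus how long it was actually executing queries. The price per credit is a placeholder, since the real rate depends on edition, region, and contract.

```python
# Credits per hour for standard Snowflake warehouses; each size doubles the one below it.
CREDITS_PER_HOUR = {"XSMALL": 1, "SMALL": 2, "MEDIUM": 4, "LARGE": 8, "XLARGE": 16}


def idle_credits(size: str, running_hours: float, busy_hours: float) -> float:
    """Credits billed while a warehouse was running but not executing any queries."""
    if not 0 <= busy_hours <= running_hours:
        raise ValueError("busy_hours must be between 0 and running_hours")
    return (running_hours - busy_hours) * CREDITS_PER_HOUR[size]


PRICE_PER_CREDIT = 3.00  # placeholder USD rate; substitute your contracted price

# Hypothetical day: a Medium warehouse stayed up 10 hours but ran queries for only 6.
wasted = idle_credits("MEDIUM", running_hours=10, busy_hours=6)
print(wasted, "credits, about", f"${wasted * PRICE_PER_CREDIT:,.2f}")
```

Lowering the warehouse's auto-suspend setting is the usual lever for shrinking that idle window.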

This makes Acceldata particularly relevant for organizations running complex hybrid architectures: a Snowflake warehouse on one side, on-prem Hadoop or Cloudera on the other, with Databricks in the middle. The platform handles all of these natively, which is rare in a category where most vendors assume a clean cloud-only setup.

That breadth comes at the cost of depth in any single area. Teams whose primary need is ML-driven anomaly detection across a dbt-modeled warehouse will sometimes find Acceldata’s data quality features less polished than those of Monte Carlo or Bigeye. The platform shines when “data observability” actually means “the entire data infrastructure stack.”

Best suited for: Enterprises with hybrid cloud and on-prem data estates, FinOps-conscious organizations watching warehouse spend, and teams whose data reliability concerns include compute performance.

Limitations: Implementation is heavier than pure data quality tools. Pricing is enterprise-only, with no self-serve or trial path. Smaller teams or modern-stack-only shops will not use most of the platform.

4. Datafold

Best for: Engineering teams that want to catch data quality issues during code review, not after deployment.

Website: datafold.com

Datafold flips the data observability model. Instead of waiting for bad data to appear in production and then alerting, Datafold focuses on preventing the bad data from being deployed in the first place. The headline feature is data diff: when a developer opens a pull request that changes a SQL model or dbt transformation, Datafold compares the output of the modified code against the existing version row by row and surfaces the impact in the PR itself.
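
The core idea of a data diff fits in a few lines. This in-memory sketch matches rows from the old and new versions of a model on a primary key, then reports added, removed, and changed rows. It illustrates the technique and is not Datafold's implementation, which has to work against tables far too large to load into memory. The `id` key and sample rows are hypothetical.

```python
from typing import Any

Row = dict[str, Any]


def data_diff(before: list[Row], after: list[Row], key: str) -> dict[str, list]:
    """Compare two versions of a model's output, matching rows on a primary key column."""
    old = {row[key]: row for row in before}
    new = {row[key]: row for row in after}
    changed = []
    for k in sorted(old.keys() & new.keys()):
        # Columns whose values differ; a column absent on one side is compared as None.
        cols = sorted(c for c in old[k].keys() | new[k].keys()
                      if old[k].get(c) != new[k].get(c))
        if cols:
            changed.append((k, cols))
    return {
        "added": sorted(new.keys() - old.keys()),
        "removed": sorted(old.keys() - new.keys()),
        "changed": changed,
    }


production = [{"id": 1, "amount": 100}, {"id": 2, "amount": 50}, {"id": 3, "amount": 75}]
pull_request = [{"id": 1, "amount": 100}, {"id": 2, "amount": 55}, {"id": 4, "amount": 20}]
print(data_diff(production, pull_request, key="id"))
```

In a CI job, non-empty `removed` or `changed` lists are the kind of result that would be posted back to the pull request for a reviewer to confirm or reject.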

For teams running mature dbt or SQLMesh workflows, this changes how data engineering operates. Schema changes, regression bugs, and unintended row count shifts get caught at code review, the same way software engineers catch bugs through CI. The platform also offers traditional monitoring and lineage, but the diff and CI integration is what makes it distinctive.

Datafold has invested heavily in AI features, including agents that automate migration work (for example, translating stored procedures from Teradata to Snowflake) and recommend fixes based on lineage and historical issues.

Best suited for: Teams with strong analytics engineering practices, organizations running dbt or SQLMesh, and data platforms where pull request workflows are already standard.

Limitations: Less useful for teams that have not adopted code-based transformation. The pre-deployment focus means it is a complement to, not a replacement for, runtime monitoring on production pipelines. Pricing is mid-market and up.

5. Soda

Best for: Teams that want data quality as code, with both open-source and managed options.

Website: soda.io

Soda occupies a useful middle ground. Soda Core is the open-source library that lets engineers define data quality checks in YAML and run them inside pipelines or CI/CD. Soda Cloud builds a managed observability layer on top, adding monitoring dashboards, alerting, lineage, and collaboration features for non-engineering stakeholders.

The “checks as code” philosophy is the draw. Every check lives in version control, which means quality logic is reviewable, testable, and reproducible across environments. For data engineering teams that already operate with software engineering rigor, this fits the existing workflow far better than configuring monitors through a vendor UI.
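
Soda's own checks are written in its YAML-based SodaCL language. The Python sketch below is not SodaCL and does not use Soda's API. It only illustrates the checks-as-code idea under simple assumptions: checks are named predicates kept in a file under version control, and a pipeline step reports which ones fail. Table and column names are hypothetical.

```python
from typing import Any, Callable

Row = dict[str, Any]
Check = tuple[str, Callable[[list[Row]], bool]]

# Hypothetical checks for an "orders" table, reviewed and versioned like any other code.
ORDERS_CHECKS: list[Check] = [
    ("row_count > 0", lambda rows: len(rows) > 0),
    ("customer_id has no missing values",
     lambda rows: all(r.get("customer_id") is not None for r in rows)),
    ("amount is never negative",
     lambda rows: all((r.get("amount") or 0) >= 0 for r in rows)),
    ("order_id is unique",
     lambda rows: len({r.get("order_id") for r in rows}) == len(rows)),
]


def run_checks(rows: list[Row], checks: list[Check]) -> list[str]:
    """Return the names of failed checks; an empty list means the batch passes."""
    return [name for name, check in checks if not check(rows)]


# A hypothetical batch with a duplicate order_id and a negative amount.
batch = [
    {"order_id": 1, "customer_id": 10, "amount": 25.0},
    {"order_id": 1, "customer_id": 11, "amount": -5.0},
]
for failed in run_checks(batch, ORDERS_CHECKS):
    print("FAILED:", failed)
```

Wiring the result to the process exit code is what lets a CI pipeline block a merge on a failed check. For example, the script could exit non-zero whenever the failure list is non-empty.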

Soda has expanded its AI agent capabilities meaningfully through 2025 and 2026, with features that suggest checks based on observed data patterns and help triage incidents. The trade-off remains the same as with all “as code” approaches: someone has to write and maintain the rules. The platform does not blanket your warehouse with automatic monitoring the way Bigeye or Monte Carlo do.

Best suited for: Engineering-led data teams, organizations with strong CI/CD discipline, and platforms where quality logic needs to live in the same repository as transformation code.

Limitations: Higher upfront engineering investment. Less out-of-the-box automation than ML-first tools. Soda Cloud pricing is enterprise-tier; Soda Core is free but self-managed.

6. Sifflet

Best for: Teams where business users need visibility into data reliability, not just engineers.

Website: siffletdata.com

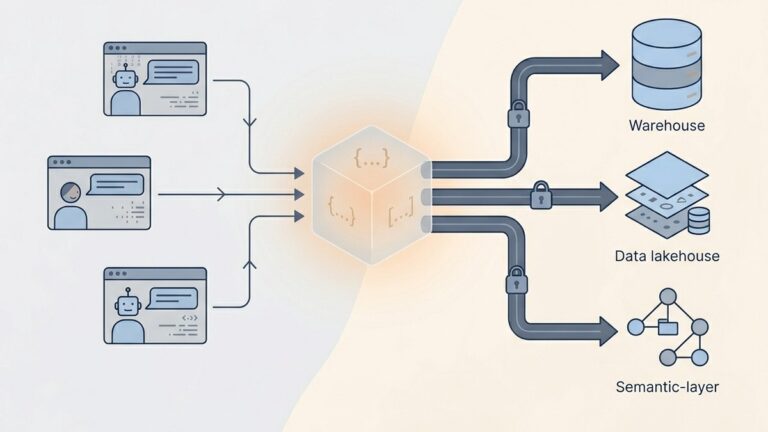

Sifflet leans into a use case most observability tools quietly ignore: making data health legible to non-engineers. The platform places monitors and alerts inside a data catalog, so when an analyst or stakeholder browses a table to use it for reporting, they see freshness, recent issues, and ownership in the same view.

This catalog-centric approach pairs well with field-level lineage that traces issues both upstream (where did this break?) and downstream (which dashboards and KPIs are affected?). The impact analysis is presented in business terms rather than purely technical ones, which makes it easier for data leaders to communicate with the rest of the organization when something goes wrong.
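
Upstream and downstream impact analysis are both graph traversals over lineage. This is a minimal sketch that assumes lineage is available as a hypothetical mapping from each asset to the assets that read from it. Field-level lineage would use `table.column` nodes instead of tables. The asset names mirror the opening example and are made up.

```python
from collections import defaultdict, deque


def reachable(graph: dict[str, list[str]], start: str) -> set[str]:
    """Every node reachable from `start` by following edges, found breadth-first."""
    seen: set[str] = set()
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in graph.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen


def invert(graph: dict[str, list[str]]) -> dict[str, list[str]]:
    """Reverse every edge so traversal walks from consumers back to sources."""
    parents: dict[str, list[str]] = defaultdict(list)
    for node, children in graph.items():
        for child in children:
            parents[child].append(node)
    return dict(parents)


# Edges point downstream: source -> the assets built from it.
LINEAGE = {
    "salesforce.opportunity": ["stg_opportunities"],
    "stg_opportunities": ["fct_revenue"],
    "fct_revenue": ["revenue_dashboard", "q3_forecast"],
}

print(sorted(reachable(LINEAGE, "salesforce.opportunity")))     # what breaks downstream
print(sorted(reachable(invert(LINEAGE), "revenue_dashboard")))  # where to look upstream
```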

Sifflet’s auto-coverage feature uses usage signals to prioritize monitoring on the data that actually matters to the business, rather than blanketing every table equally. For teams that have been overwhelmed by alert noise from broader platforms, this targeted approach can be a meaningful improvement.
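
The ranking below is not Sifflet's scoring. It is a hypothetical illustration of usage-driven coverage. It assumes you have query counts per table, for example from the warehouse's query history, and the number of downstream assets each table feeds. It then spends a fixed monitoring budget on the tables that matter most.

```python
def prioritize(query_counts: dict[str, int], downstream_assets: dict[str, int],
               budget: int) -> list[str]:
    """Choose `budget` tables to monitor: most-queried first, ties broken by downstream reach."""
    ranked = sorted(
        query_counts,
        key=lambda table: (query_counts[table], downstream_assets.get(table, 0)),
        reverse=True,
    )
    return ranked[:budget]


# Hypothetical 30-day usage numbers.
queries = {"fct_revenue": 1_200, "dim_customer": 800, "fct_orders": 800, "tmp_backfill_2024": 3}
downstream = {"fct_revenue": 12, "dim_customer": 9, "fct_orders": 4}
print(prioritize(queries, downstream, budget=3))
```

A rarely queried scratch table like `tmp_backfill_2024` falls outside the budget. That cutoff is where the reduction in alert noise comes from.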

Best suited for: Organizations with embedded analytics or data mesh architectures, teams where business users self-serve from the warehouse, and companies wanting to extend trust signals beyond the engineering team.

Limitations: Smaller community and ecosystem than Monte Carlo. The catalog-first model assumes you want to consolidate observability and discovery, which not every team does. Less suited for teams that only need pipeline-level alerting.

7. Elementary

Best for: dbt-native teams that want observability built into their existing transformation workflow.

Website: elementary-data.com

Elementary started as an open-source dbt package and grew into a full observability platform built around the dbt graph. If your team already lives in dbt, the integration is unusually deep: tests, models, exposures, and run results feed directly into the observability layer without separate ingestion or configuration.
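
The raw material for dbt-native monitoring is the set of artifacts dbt writes after each invocation. The function below is a rough sketch of working with that input and is not Elementary's code. It groups the nodes in dbt's `run_results.json`, written to the `target/` directory by default, by status. It reads only the `results`, `unique_id`, and `status` fields, and the sample node IDs are hypothetical.

```python
import json
from pathlib import Path
from typing import Any

# dbt statuses that deserve attention (models report "error"; tests report "fail" or "warn").
ATTENTION_STATUSES = {"error", "fail", "warn"}


def load_run_results(path: Path = Path("target/run_results.json")) -> dict[str, Any]:
    """Read the run_results.json artifact dbt writes after a run, test, or build."""
    return json.loads(path.read_text())


def group_by_status(run_results: dict[str, Any]) -> dict[str, list[str]]:
    """Map each status to the unique_ids of the nodes that ended with it."""
    grouped: dict[str, list[str]] = {}
    for result in run_results["results"]:
        grouped.setdefault(result["status"], []).append(result["unique_id"])
    return grouped


def needs_attention(grouped: dict[str, list[str]]) -> list[str]:
    """Flatten every node whose status is in ATTENTION_STATUSES."""
    return sorted(uid for status, ids in grouped.items()
                  if status in ATTENTION_STATUSES for uid in ids)


sample = {"results": [
    {"unique_id": "model.shop.fct_revenue", "status": "success"},
    {"unique_id": "test.shop.not_null_fct_revenue_amount", "status": "fail"},
    {"unique_id": "test.shop.unique_fct_revenue_id", "status": "pass"},
]}
print(needs_attention(group_by_status(sample)))
```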

The open-source version is genuinely useful and free, which makes Elementary a common starting point for analytics engineering teams that want monitoring without a procurement cycle. Elementary Cloud adds the managed layer: automated anomaly detection, column-level lineage, alerting integrations, and a UI built for triage and collaboration.

What distinguishes Elementary from broader platforms is its opinionated focus on the transformation layer. It assumes dbt or SQLMesh is the source of truth for your data models, and it builds monitoring, lineage, and incident response around that assumption. For teams operating that way, the result feels native rather than bolted on.

Best suited for: dbt-heavy analytics engineering teams, organizations standardized on the modern data stack, and teams that want to start with open source and grow into managed.

Limitations: Coverage outside dbt-modeled data is thinner. Less useful for organizations using GUI-based ETL tools or non-dbt transformation. The open-source version requires self-hosting and ongoing maintenance.

How to Choose: A Decision Framework

The right platform depends less on feature lists and more on three factors: how your team works, where your data lives, and what failure mode hurts most.

If you need broad coverage across a complex enterprise stack and have the budget to match, Monte Carlo is the most established choice. The platform handles the widest range of integrations and offers the deepest ML-driven automation in the category.

If you want fast time-to-value and lower configuration overhead, Bigeye is built for that. Auto-profiling and automatic monitor generation reduce the upfront investment significantly.

If your concerns extend beyond data quality to compute and infrastructure, Acceldata is the only platform on this list that treats those as a unified problem. Hybrid cloud and on-prem environments are where it pulls ahead.

If your team operates with software engineering discipline and wants to catch issues before deployment, Datafold changes the workflow rather than just monitoring it. Data diff in pull requests is genuinely differentiating.

If you want quality checks defined as code and version controlled alongside your transformations, Soda is the strongest fit. The open-source core makes it accessible to start with.

If non-technical stakeholders need to trust the data themselves, Sifflet brings observability into the catalog where they already browse.

If your stack is dbt-first and you want observability that feels native, Elementary is built specifically for that path.

Quick Comparison Table

| Tool | Pricing Model | Best Stack Fit | Setup Time | Key Differentiator |
|---|---|---|---|---|
| Monte Carlo | Credit-based, custom | Enterprise, multi-source | Days | Broadest coverage, ML monitors, AI observability |
| Bigeye | Custom enterprise | Modern + legacy | Hours to days | Auto-profiling, fast time-to-value |
| Acceldata | Custom enterprise | Hybrid cloud + on-prem | Weeks | Data + compute + infrastructure observability |
| Datafold | Mid-market and up | dbt / SQLMesh teams | Days | Data diff in pull requests, AI migration agents |
| Soda | Free OSS / paid Cloud | CI/CD-mature teams | Days | Quality checks as code |
| Sifflet | Custom | Catalog-centric orgs | Days | Catalog-integrated reliability for business users |
| Elementary | Free OSS / paid Cloud | dbt-native teams | Hours | Native dbt observability |

What Has Changed in 2026

A few shifts are worth flagging if you are evaluating now rather than a year ago.

The boundary between data observability and AI observability is collapsing. Monte Carlo, Datafold, and Soda have all extended monitoring into model inputs, retrieval quality for RAG systems, and agent behavior in production. If your roadmap includes AI workloads, prioritize platforms that have credible coverage there, not just dashboards.

Datadog acquired Metaplane in April 2025 and is porting the platform onto Datadog’s infrastructure. This signals broader consolidation between application and data observability, and it means teams currently on Metaplane should track the migration timeline closely.

Warehouse-native data quality features are improving. Snowflake, Databricks, and BigQuery have all added quality and monitoring capabilities directly. None of them yet match dedicated platforms for multi-source workflows or sophisticated lineage, but the gap is narrowing for teams running entirely inside one warehouse.

Final Thought

Data observability is no longer a category that needs justifying to executives. The cost of broken data is concrete, and the tools to prevent it have matured into something a serious data team can rely on. The harder question is which philosophy fits your organization.

Pipeline-first platforms like Monte Carlo and Bigeye solve a different problem than code-first tools like Soda and Datafold. Catalog-centric platforms like Sifflet aim at a different audience than dbt-native ones like Elementary. The right answer is rarely the most popular tool. It is the one whose model matches how your team actually builds, ships, and consumes data.

Most platforms on this list offer free trials, free tiers, or open-source starting points. There is little reason not to test two or three side by side against a real failure scenario from the last quarter, then commit based on what actually catches the issue, not on the demo.

Lurika independently evaluates data tools. Some links in this article may be affiliate links, and we may earn a commission if you sign up through them, at no extra cost to you. This does not influence our editorial recommendations. We only recommend tools we would genuinely suggest to a colleague.