Iceberg vs Delta Lake: How to Choose a Table Format Without Betting Wrong

Last updated: May 2026

A data engineering team is six months into building out a new lakehouse on S3. The ingestion pipelines are in place, the first transformations are running on Spark, and the first dashboards are connecting through Trino. Then a batch of requests lands in the team’s Slack: the analytics team wants to query the same tables from Snowflake, the ML team is standardizing on Polars, and the finance organization is asking whether everything could eventually move under Unity Catalog. And by the way, would the team mind documenting why they picked Delta over Iceberg in the first place?

Nobody picked. The Spark platform the team started on wrote Delta by default, and six months of pipelines later, the choice is now infrastructure. Reversing it costs months. Living with it might cost more.

This is the position a lot of data teams are in. The “table format war” is the most discussed and least clearly resolved decision in the modern data stack, and most of what is written about it is either vendor-flavored or two years out of date. The landscape in 2026 is genuinely different from the landscape in 2023, and the right answer for a new platform is not the same as the right answer for an existing one.

This guide breaks down what each format actually is, where the real differences sit now that both projects have matured and partially converged, and how to make a choice that holds up under the next refactor.

What a table format actually does

Before getting into the comparison, it is worth nailing down what these projects are and are not. Iceberg and Delta Lake are not file formats. The data files are still Parquet in both cases. They are not query engines, they are not catalogs in the traditional sense, and they are not data warehouses.

A table format is the metadata layer that sits between your Parquet files and the engines reading them. Without one, a “table” in object storage is just a folder full of files, and any operation more complex than SELECT * runs into problems: you cannot atomically replace a partition, you cannot roll back a bad write, schema changes break everything downstream, and listing files at scale on S3 is painfully slow.

Both Iceberg and Delta Lake solve this with the same general approach: track every change to a table in a metadata file alongside the data, so engines can see a transactionally consistent view of which files belong to which table at which version. Both give you ACID transactions, time travel, schema evolution, and the ability to skip files based on metadata statistics rather than scanning everything. As the team at PuppyGraph noted in their comparison, the projects increasingly look alike at the conceptual level.
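To make the shared idea concrete, here is a minimal sketch of a metadata layer as an append-only list of snapshots. It is a toy model, not how either project stores metadata on disk: the names `ToyTable`, `Snapshot`, `commit`, and `rollback` are hypothetical, and the conflict check is deliberately stricter than the conflict resolution the real formats perform.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Snapshot:
    """One committed table version: the full set of live data files."""
    version: int
    files: frozenset


@dataclass
class ToyTable:
    """Hypothetical in-memory stand-in for a table format's metadata layer."""
    snapshots: list = field(default_factory=lambda: [Snapshot(0, frozenset())])

    def current(self) -> Snapshot:
        return self.snapshots[-1]

    def commit(self, base_version: int, add=(), remove=()) -> Snapshot:
        # Optimistic concurrency (simplified): a writer that planned against
        # a stale version must retry instead of overwriting newer commits.
        if base_version != self.current().version:
            raise RuntimeError("commit conflict: table changed since read")
        files = (self.current().files - frozenset(remove)) | frozenset(add)
        snap = Snapshot(base_version + 1, files)
        self.snapshots.append(snap)
        return snap

    def files_as_of(self, version: int) -> frozenset:
        # Time travel: a reader picks a version and sees exactly its file set.
        return self.snapshots[version].files

    def rollback(self, version: int) -> Snapshot:
        # Rolling back is a new commit that restores an older file set.
        snap = Snapshot(self.current().version + 1, self.files_as_of(version))
        self.snapshots.append(snap)
        return snap


table = ToyTable()
table.commit(0, add={"part-000.parquet", "part-001.parquet"})
table.commit(1, add={"part-002.parquet"}, remove={"part-000.parquet"})
print(sorted(table.files_as_of(1)))
print(sorted(table.current().files))
```

The point of the model is that data files are never edited in place. Every change is a new version that names a different set of files, which is what makes atomic replacement, rollback, and time travel possible on top of plain object storage.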

The differences are in the details, and the details are where you will spend the next three years of operational pain or operational ease.

Where each format came from (and why it still matters)

Origin stories tend to get framed as trivia, but in this case they shape the products in ways you will feel in production.

Apache Iceberg started at Netflix in 2017, where Ryan Blue and Daniel Weeks needed a replacement for Hive tables that could handle petabyte-scale datasets without falling over on the metadata layer. Hive’s approach of listing files on the filesystem and tracking partitions in a metastore was breaking down: directory listings on S3 were slow, partition counts hit limits, and concurrent writes were unsafe. Iceberg was designed from the start to be engine-agnostic. Netflix had a heterogeneous stack and needed any engine to be able to read and write the same tables, so the format had to belong to the data, not to a specific compute engine.

Delta Lake came out of Databricks the same year, but with a different priority order. Databricks’s customers were running Spark, and the immediate problem was bringing transactional reliability to data lake workloads on Spark. Delta got ACID transactions, schema enforcement, and time travel, all integrated tightly with the Spark runtime. The depth of that integration is still its biggest strength inside the Spark and Databricks ecosystem, and historically its biggest limitation outside of it.

Both formats moved into open governance. Iceberg is an Apache project. Delta was open-sourced under the Linux Foundation in 2019, and the codebase is genuinely open. The branding makes it look like Databricks’s product, and in practice Databricks drives most of the development, but the spec is public.

The ecosystem has shifted significantly in the last two years. In June 2024, Databricks announced its acquisition of Tabular, the company founded by Iceberg’s original creators. Reading the announcement carefully is worth the time. Databricks now employs the people who built both formats, and the company has publicly committed to converging them. That commitment changes the calculus of “which format do I bet on” considerably.

The four differences that actually matter

Most comparison articles produce a long checklist of features. In practice, a small number of differences drive almost every real decision.

1. Engine support and the multi-engine question

Iceberg was designed to be engine-agnostic, and the ecosystem reflects that. Production-grade support exists in Spark, Flink, Trino, Presto, Dremio, Snowflake, AWS Athena, BigQuery, and StarRocks. The Iceberg REST Catalog API is an open specification that any vendor can implement, and most of the ones that matter have. This is the main reason teams building deliberately multi-engine architectures lean toward Iceberg.

Delta Lake delivers its strongest performance and most complete feature set inside Spark and Databricks. Connectors exist for other engines, but historically the gap in feature parity and performance has been real. Recent initiatives close some of that gap: Delta Kernel gives connector authors Java and Rust libraries for working with Delta tables without a Spark runtime, and Delta Connect exposes Delta operations through Spark Connect clients. But the center of gravity remains Spark.

For a data team, the practical question is which engines you actually use, not which engines theoretically support the format. If your stack is Spark and Databricks all the way down, the multi-engine argument is hypothetical. If you are running Trino for interactive queries, Spark for batch, and want to expose tables to Snowflake or Athena, Iceberg’s broader support is a concrete advantage today.

2. Catalog architecture and governance

The catalog is where a lot of the operational pain lives, and it is where the two formats differ most.

Iceberg’s REST catalog specification has produced a healthy market of implementations: Apache Polaris (co-created by Snowflake and Dremio), AWS Glue, Project Nessie, and several commercial options. You can run multiple catalogs, switch between them, and avoid being locked into a single vendor’s metadata service. This is genuinely useful when organizational boundaries do not line up with technology choices, which they almost never do.
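As an illustration of what "any vendor can implement" means in practice, the sketch below builds the Spark settings that point a session at an Iceberg REST catalog. The property keys follow the Iceberg Spark configuration as I understand it; the helper `rest_catalog_conf`, the catalog name `lake`, the URL, and the warehouse name are all hypothetical placeholders, and a real deployment would also need the Iceberg Spark runtime on the classpath plus whatever credentials the catalog requires.

```python
def rest_catalog_conf(name: str, uri: str, warehouse: str) -> dict:
    """Return Spark settings for an Iceberg REST catalog (illustrative helper)."""
    prefix = f"spark.sql.catalog.{name}"
    return {
        # Enables Iceberg SQL extensions such as CALL procedures.
        "spark.sql.extensions":
            "org.apache.iceberg.spark.extensions.IcebergSparkSessionExtensions",
        # Registers a Spark catalog backed by Iceberg.
        prefix: "org.apache.iceberg.spark.SparkCatalog",
        # Selects the REST implementation and points it at the service.
        f"{prefix}.type": "rest",
        f"{prefix}.uri": uri,
        f"{prefix}.warehouse": warehouse,
    }


# Placeholder endpoint and warehouse; substitute the values for your catalog.
conf = rest_catalog_conf(
    "lake", "https://catalog.example.com/api/catalog", "analytics"
)
for key, value in sorted(conf.items()):
    print(f"{key}={value}")
```

Switching catalog vendors under this model should mostly mean changing the URI and credentials rather than the table format, which is the portability argument in a nutshell.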

Delta Lake’s primary governance layer is Unity Catalog, which is owned and operated by Databricks. Unity Catalog provides a strong feature set, including lineage, fine-grained access control, and data sharing, but those advanced features are most complete inside Databricks. The Hive Metastore is supported as a fallback, but you give up a lot moving away from Unity. As of late 2025, Databricks has extended Unity Catalog to govern Iceberg tables alongside Delta Lake, which Capital One’s engineering team described as “catalog-centered design” replacing format-centered design as the main architectural decision.

If your organization treats data governance as a centralized, single-vendor concern, Unity Catalog is genuinely strong. If your governance has to span multiple platforms or you specifically want to avoid concentrating metadata control in one vendor, the open Iceberg catalog ecosystem is a better starting point.

3. Partition evolution and schema flexibility

Iceberg’s partition evolution is one of its most clearly differentiated features. You can change how a table is partitioned, say, from daily to hourly, or by a different column entirely, without rewriting any existing data. New writes use the new scheme, old data keeps its original layout, and queries handle both transparently. This sounds like a niche feature until you spend a week rebuilding a multi-terabyte table because someone underestimated how much volume a partition would accumulate.
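A rough way to picture how queries can "handle both transparently": each data file remembers which partition scheme it was written under, and the planner applies that scheme when deciding whether the file can match a filter. The sketch below is a simplified model of that idea, assuming a daily-to-hourly change; `SPECS`, `DataFile`, and `plan_scan` are hypothetical names, not Iceberg APIs.

```python
from dataclasses import dataclass
from datetime import datetime


def day_transform(ts: datetime) -> str:
    return ts.strftime("%Y-%m-%d")


def hour_transform(ts: datetime) -> str:
    return ts.strftime("%Y-%m-%d-%H")


# Spec 0 is the original daily layout; spec 1 is the later hourly layout.
SPECS = {0: day_transform, 1: hour_transform}


@dataclass(frozen=True)
class DataFile:
    path: str
    spec_id: int    # which partition scheme this file was written under
    partition: str  # the partition value under that scheme


def plan_scan(files, ts: datetime):
    """Keep files whose partition could contain rows at timestamp ts.

    Each file is tested with the transform of its own spec, so old and new
    layouts are pruned side by side without rewriting any data.
    """
    return [f.path for f in files if SPECS[f.spec_id](ts) == f.partition]


files = [
    DataFile("old/2026-01-01.parquet", 0, "2026-01-01"),
    DataFile("old/2026-01-02.parquet", 0, "2026-01-02"),
    DataFile("new/2026-03-01-09.parquet", 1, "2026-03-01-09"),
    DataFile("new/2026-03-01-10.parquet", 1, "2026-03-01-10"),
]

print(plan_scan(files, datetime(2026, 1, 2, 5, 0)))
print(plan_scan(files, datetime(2026, 3, 1, 10, 30)))
```

Old files are pruned at day granularity and new files at hour granularity, so the older data is simply pruned less finely until someone chooses to rewrite it.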

Delta Lake historically required a full table rewrite to change partition schemes, which made the decision feel permanent in a way it should not. Liquid Clustering, introduced in Delta Lake 3.0, addresses some of this by replacing static partitioning with a clustering approach that can adapt over time, and is the recommended path on Databricks for new tables. It is a different architectural answer to the same problem rather than a direct equivalent of partition evolution, but for many workloads the operational outcome is similar.

Both formats handle schema evolution (adding, dropping, renaming, reordering columns) with backward compatibility. The differences here are smaller than they used to be.

4. Convergence: UniForm, XTable, and the “write once, read anywhere” promise

This is the most important development for anyone making a decision in 2026, and it is the part that most older comparisons get wrong.

Delta Lake UniForm lets a Delta table automatically generate Iceberg-compatible metadata in parallel, so Iceberg clients (Snowflake, Trino, Athena, BigQuery) can read Delta tables without conversion, duplication, or rewriting any data. The Parquet files stay where they are; the table simply exposes two metadata interfaces over the same underlying storage. This is the practical answer to “what if I picked wrong” for a lot of Delta-based platforms.
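For an existing Delta table, turning on UniForm is a matter of table properties. The helper below only renders the SQL statement as a string; it does not run anything. The property names reflect the Delta Lake documentation as I understand it at the time of writing, the function name and table name are hypothetical, and the exact requirements (runtime version, column mapping, reader and writer protocol upgrades) should be checked against the docs for your release before running it.

```python
def uniform_alter_statement(table_name: str) -> str:
    """Render an ALTER TABLE statement that enables UniForm (Iceberg).

    Illustrative only: verify property names and prerequisites against the
    Delta Lake documentation for the version you run.
    """
    props = {
        # Iceberg compatibility relies on column mapping being enabled.
        "delta.columnMapping.mode": "name",
        "delta.enableIcebergCompatV2": "true",
        # Ask Delta to also maintain Iceberg metadata for this table.
        "delta.universalFormat.enabledFormats": "iceberg",
    }
    rendered = ", ".join(f"'{key}' = '{value}'" for key, value in props.items())
    return f"ALTER TABLE {table_name} SET TBLPROPERTIES ({rendered})"


# "sales.orders" is a placeholder table name.
print(uniform_alter_statement("sales.orders"))
```

The statement changes metadata behavior only. The Parquet files stay in place, which is why this works as a low-risk way to expose an existing Delta table to Iceberg readers.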

The other side of the convergence story is Apache XTable (formerly OneTable), which provides translation between Hudi, Delta, and Iceberg metadata. It is co-developed by Microsoft, Google, and Onehouse, and it lets a single physical dataset be read as any of the three formats by different engines. XTable is more useful when you need true cross-format interoperability rather than the unidirectional Delta-as-Iceberg path UniForm provides.

The takeaway is that “Delta or Iceberg?” is increasingly the wrong frame. The right frame is which format you write in and which format(s) you expose for reads, with the understanding that those can now be different.

Performance: the conversation that wastes everyone’s time

Almost every benchmark you will see comparing these formats is somewhere between misleading and useless. Vendor benchmarks are designed to favor the vendor’s product. Independent benchmarks rarely match real workloads. And both formats have improved enough in the last 18 months that any benchmark older than 2025 is essentially historical.

A few honest observations from people who have run both in production:

Iceberg’s hierarchical metadata structure (manifest list → manifest files → data files) prunes irrelevant files efficiently before scanning, which scales predictably as tables grow into the billions of files. Delta’s transaction log can require more frequent checkpointing for very large or high-write-throughput tables, although recent versions have substantially improved checkpoint performance.
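The pruning idea can be sketched in a few lines. This is a toy model under a loud simplification: it keeps one min/max range for a single `id` column at both levels, whereas the real format stores partition summaries in the manifest list and per-column statistics in the manifests. `FileEntry`, `Manifest`, and `plan` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FileEntry:
    path: str
    min_id: int
    max_id: int


@dataclass(frozen=True)
class Manifest:
    path: str
    files: tuple

    @property
    def min_id(self) -> int:
        return min(f.min_id for f in self.files)

    @property
    def max_id(self) -> int:
        return max(f.max_id for f in self.files)


def overlaps(lo: int, hi: int, min_v: int, max_v: int) -> bool:
    return min_v <= hi and max_v >= lo


def plan(manifest_list, lo: int, hi: int):
    """Return (data files to scan, number of manifests opened) for lo..hi."""
    opened, selected = 0, []
    for manifest in manifest_list:
        # Level 1: skip a whole manifest from its summary, without opening it.
        if not overlaps(lo, hi, manifest.min_id, manifest.max_id):
            continue
        opened += 1
        # Level 2: inside a surviving manifest, skip files by their own stats.
        selected.extend(
            f.path for f in manifest.files
            if overlaps(lo, hi, f.min_id, f.max_id)
        )
    return selected, opened


manifest_list = [
    Manifest("m0.avro", (FileEntry("a.parquet", 0, 99),
                         FileEntry("b.parquet", 100, 199))),
    Manifest("m1.avro", (FileEntry("c.parquet", 200, 299),
                         FileEntry("d.parquet", 300, 399))),
]

print(plan(manifest_list, 250, 260))
```

Planning cost tracks the number of manifests that overlap the filter rather than the total number of files in the table, which is the property the scaling claim rests on.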

For most workloads at most scales, the format is not the bottleneck. As one practitioner blog put it bluntly, “the table format matters less than your table maintenance.” The teams that suffer performance problems are usually the ones that never run compaction on Iceberg or never run OPTIMIZE and VACUUM on Delta. Operational discipline beats format choice by a wide margin.

If you are at a scale where you genuinely need to benchmark, benchmark with your data, your queries, and your engines. Treat published numbers as marketing material until proven otherwise.

What this means for analytics workflows

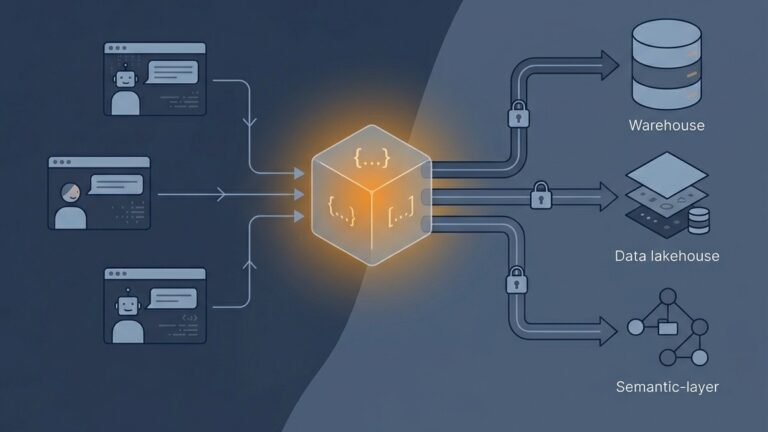

For data teams that consume lakehouse tables more than they produce them, the convergence story has a useful implication: the choice of table format at the storage layer increasingly does not have to dictate the choice of analytics tooling on top. A team can have its raw and curated layers stored in Delta with UniForm enabled, and analysts and downstream platforms can connect to those tables through Iceberg-compatible engines without anyone in the analytics layer needing to care about which format won the architectural debate underneath.

This decoupling matters because analytics tooling moves faster than storage. Modern analytics platforms increasingly connect to a wide range of warehouses and query engines on the read side, and platforms that take a no-code approach to data ingestion across SQL databases, REST APIs, and other sources can sit downstream of either format without forcing the underlying decision. The lakehouse table format is becoming an infrastructure detail, not a constraint on the analytics layer above it.

A decision framework that actually helps

Most “Iceberg vs Delta Lake” decision flowcharts ask the wrong questions. Here is what actually moves the needle.

If you are building greenfield, with no Spark or Databricks lock-in, pick Iceberg. The ecosystem is broader, the catalog landscape is healthier, and partition evolution is genuinely useful when requirements change, which they will. A mix of multiple engines and multiple catalogs is the realistic future for most organizations of any size.

If you are already on Databricks, stay on Delta. The integration is excellent, Unity Catalog is a strong governance layer, and UniForm gives you the Iceberg interoperability story for downstream consumers. Migrating off Delta inside the Databricks ecosystem is solving a problem you do not have.

If you are already on Snowflake and adding lakehouse capability, lead with Iceberg. Snowflake’s Iceberg support is now a first-class citizen, and the catalog story (Polaris) aligns with the rest of your stack.

If you have parts of the organization on Databricks and parts on Snowflake, that is the case for UniForm. Write Delta in the Databricks-centric pipelines, expose Iceberg metadata, and let the Snowflake-side workloads read the same tables. This is what UniForm was built for.

If you are migrating from Hudi, target Iceberg. The tooling and engine support are broader, and the migration paths are better documented. With all due respect to the Hudi project, the mindshare and momentum have moved.

If you genuinely need format-agnostic storage, evaluate Apache XTable. This is a smaller, more specialized case, but it is real for organizations with substantial existing data in mixed formats.
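The rules above can be written down as a small function, which is a useful exercise mainly because it forces an ordering when several conditions apply at once. This is one possible reading of the framework, not a definitive encoding: the `Platform` fields, the `recommend` function, and the precedence between rules are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Platform:
    """Hypothetical description of where a team stands today."""
    on_databricks: bool = False
    on_snowflake: bool = False
    migrating_from_hudi: bool = False
    mixed_formats_in_place: bool = False


def recommend(p: Platform) -> str:
    # Precedence is a judgment call: the most constrained situations first.
    if p.mixed_formats_in_place:
        return "Evaluate Apache XTable"
    if p.on_databricks and p.on_snowflake:
        return "Delta with UniForm"
    if p.on_databricks:
        return "Delta"
    if p.on_snowflake or p.migrating_from_hudi:
        return "Iceberg"
    # Greenfield with no existing commitments.
    return "Iceberg"


print(recommend(Platform()))
print(recommend(Platform(on_databricks=True, on_snowflake=True)))
```

Notice that every input is a fact about the existing platform and none is a benchmark result, which matches the argument of this guide.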

The traps to avoid

A few specific patterns reliably go wrong, regardless of which format you pick.

Picking the format before picking the catalog. The catalog architecture often constrains the table format choice more than the other way around. If your governance requirements push you toward Unity Catalog, you are most likely picking Delta whether you frame it that way or not. If they push you toward an open REST catalog, you are picking Iceberg.

Underestimating maintenance. Both formats accumulate small files, orphaned data, and metadata bloat. Both require routine compaction, snapshot expiration, and (for Delta) vacuum operations. Skipping these does not feel like a problem until query performance has degraded for six months and nobody knows why. Build the maintenance jobs into the platform from day one, not after the first incident.
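As a starting point for those jobs, the sketch below lists the routine SQL maintenance statements for each format when run from Spark. It only builds strings for a scheduler to execute. The Iceberg procedures and the Delta commands are the ones I understand to be documented for Spark, while the helper name, the catalog name `my_catalog`, the table name, and the 168-hour retention are placeholder assumptions to adapt and verify.

```python
def maintenance_statements(fmt: str, table: str,
                           catalog: str = "my_catalog",
                           retain_hours: int = 168) -> list:
    """Return routine maintenance SQL for a table (illustrative helper)."""
    if fmt == "iceberg":
        return [
            # Compact small data files into larger ones.
            f"CALL {catalog}.system.rewrite_data_files(table => '{table}')",
            # Drop old snapshots so metadata and unreferenced data can go.
            f"CALL {catalog}.system.expire_snapshots(table => '{table}')",
            # Delete files that no snapshot references any more.
            f"CALL {catalog}.system.remove_orphan_files(table => '{table}')",
        ]
    if fmt == "delta":
        return [
            # Compact small files.
            f"OPTIMIZE {table}",
            # Remove files no longer referenced and older than the window.
            f"VACUUM {table} RETAIN {retain_hours} HOURS",
        ]
    raise ValueError(f"unknown table format: {fmt}")


for statement in maintenance_statements("iceberg", "db.events"):
    print(statement)
for statement in maintenance_statements("delta", "db.events"):
    print(statement)
```

Retention windows interact with time travel: once snapshots are expired or files are vacuumed, the versions that depended on them can no longer be read, so the window should be chosen deliberately.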

Treating the format as untested infrastructure. Both Iceberg and Delta are mature enough for production at any reasonable scale. They are not where your platform’s risk lives. The risk lives in the data quality of what you write into them, the discipline of how you maintain them, and the clarity of the contracts with the teams that produce upstream data.

Reopening the decision every six months. The discourse churns. New benchmarks and new vendor announcements will keep landing. Unless something materially changes in your workload, in your catalog choice, or in the convergence trajectory, the right move is usually to invest in operational excellence on whatever format you picked rather than reopening the foundational decision.

The bottom line

Iceberg and Delta Lake have spent the last two years converging more than they have diverged. The Tabular acquisition put the original creators of both formats inside the same company, UniForm has solved the most common interoperability problem, and Apache XTable handles the cases UniForm does not. The format-agnostic future that everyone has been promising for years is now actually available in production.

For a new platform with no constraints, Iceberg is the safer default in 2026. For a Databricks-centric platform, Delta with UniForm gives you Iceberg interoperability without giving up the integration. For everyone in between, the right answer comes from your catalog and governance requirements, not from a benchmark.

The decision is real, but the cost of getting it slightly wrong is lower than it has ever been. What still costs you, regardless of format, is operational discipline: compaction, vacuum, schema management, and the basic table hygiene that no format can do for you. That is where the actual difference between a fast lakehouse and a slow one lives.

Lurika is an independent publication covering data analytics for non-technical teams. We are not owned by any analytics vendor.