Marketing Attribution: How to Figure Out What Is Actually Working

Last updated: April 2026

You are running ads on Google and Meta. You send a weekly newsletter. You post on Instagram and LinkedIn. You rank for a handful of organic keywords. A customer buys something, and four different platforms take credit for the sale.

This is the attribution problem, and it affects every business that markets through more than one channel. The question sounds simple: which of your marketing efforts actually drove that purchase? The answer, it turns out, is one of the hardest problems in business analytics, one that a $2.3 billion software industry has been built to solve and still has not solved cleanly.

For small teams, the situation is especially frustrating. Enterprise attribution platforms like Rockerbox, Northbeam, and Measured require significant investment, technical infrastructure, and data volumes that most businesses under 50 people simply do not have. Meanwhile, the default option (looking at whatever Google Analytics or your ad platform tells you) is actively misleading, because each platform measures in its own way, with its own biases, and each one has a financial incentive to claim credit for your conversions.

The result is that most small businesses make budget allocation decisions based on numbers that do not add up, in the most literal sense. If you total the conversions reported by every platform in your stack, the sum will almost always be higher than your actual sales. Everyone is taking credit. Nobody is telling the truth.

This guide explains how marketing attribution actually works, why the standard models fail for small teams, and what you can do instead to figure out where your marketing dollars are going and whether they are coming back.

What attribution models are and why they all lie a little

A marketing attribution model is a set of rules that assigns credit for a conversion (a sale, a signup, a lead) to the marketing touchpoints a customer interacted with before converting. The concept is straightforward. The execution is where it falls apart.

The simplest model is last-click attribution, which gives 100 percent of the credit to the final touchpoint before conversion. If a customer clicked a Google ad and then bought your product, Google Ads gets all the credit. This is the default in most analytics platforms, and according to Forrester Research, 67 percent of B2B marketing teams still rely on it in 2026.

Last-click attribution is popular because it is easy to implement and easy to explain. It is also profoundly misleading. Consider a realistic customer journey: someone reads a blog post on your site through organic search, sees a retargeting ad on Instagram three days later, clicks a promotional email the following week, and finally types your URL directly into their browser to make a purchase. Under last-click attribution, “direct traffic” gets 100 percent of the credit. The blog post that introduced your brand, the Instagram ad that kept you top of mind, and the email that delivered the offer all receive zero credit. If you make budget decisions based on this data, you will defund the very channels that are filling your funnel.

First-click attribution has the opposite problem: it credits the first touchpoint and ignores everything that happened afterward. Linear attribution splits credit evenly across all touchpoints, which sounds fair but treats a passing glance at a display ad the same as a detailed product comparison page. Time-decay attribution gives more credit to touchpoints closer to the conversion, which works better for short sales cycles but undervalues brand-building activities.
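To make the differences concrete, here is a minimal Python sketch that applies the four rule-based models to the example journey described above. The function names and the `decay` value are illustrative assumptions, not any platform's API. The time-decay version weights by position in the journey rather than by elapsed time, purely to keep the example short.

```python
# Illustrative only: function names and the decay value are assumptions,
# not taken from any analytics platform. Assumes at least one touchpoint.

def _assign(touchpoints, weights):
    """Sum weights per channel so repeated touchpoints accumulate credit."""
    credit = {}
    for channel, weight in zip(touchpoints, weights):
        credit[channel] = credit.get(channel, 0.0) + weight
    return credit

def last_click(touchpoints):
    """All credit goes to the final touchpoint before conversion."""
    n = len(touchpoints)
    return _assign(touchpoints, [0.0] * (n - 1) + [1.0])

def first_click(touchpoints):
    """All credit goes to the first touchpoint."""
    n = len(touchpoints)
    return _assign(touchpoints, [1.0] + [0.0] * (n - 1))

def linear(touchpoints):
    """Credit is split evenly across every touchpoint."""
    n = len(touchpoints)
    return _assign(touchpoints, [1.0 / n] * n)

def time_decay(touchpoints, decay=0.5):
    """Each step further from the conversion gets `decay` times the weight."""
    n = len(touchpoints)
    raw = [decay ** (n - 1 - i) for i in range(n)]
    total = sum(raw)
    return _assign(touchpoints, [w / total for w in raw])

# The journey from the example: blog post, retargeting ad, email, direct visit.
journey = ["organic_search", "instagram_ad", "email", "direct"]

for model in (last_click, first_click, linear, time_decay):
    print(model.__name__, model(journey))
```

The same four touchpoints produce four different stories about what worked, which is why no single rule-based model should be read as ground truth.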

Multi-touch attribution (MTA) attempts to solve these problems by distributing credit across all touchpoints using statistical models or machine learning. It is the approach that attribution software vendors promote most aggressively, and in theory, it offers the most accurate picture. In practice, as Braze’s 2026 analysis of attribution challenges points out, MTA depends on being able to track and connect interactions across devices and sessions. When identity is inconsistent or consent limits what can be tied together, multi-touch data becomes patchy and still overvalues the touchpoints that are easiest to measure.

The IAB and BWG Global’s State of Data 2026 report found that three out of four marketers say their measurement approaches, including attribution, incrementality, and marketing mix modeling, are not delivering the speed, accuracy, or trust they need. The measurement industry itself acknowledges the problem. The question for small teams is what to do about it.

Why the privacy landscape makes this harder than it used to be

Five years ago, attribution was easier (if still imperfect) because cross-site tracking was more permissive. Third-party cookies allowed platforms to follow users across websites, building a reasonably complete picture of the customer journey. That era is ending.

Apple’s App Tracking Transparency framework requires apps to ask permission before tracking users across other apps and websites. Most users decline. Safari’s Intelligent Tracking Prevention and Firefox’s Enhanced Tracking Protection limit cookie lifespans and block cross-site tracking by default. Google Chrome is the holdout: after years of delays, Google dropped its plan to remove third-party cookies and left the choice to individual users’ settings, but that does nothing to restore the data already lost on other browsers and on iOS.

The practical impact for attribution is significant. When someone clicks your Instagram ad on their phone but does not grant tracking permission, that touchpoint can disappear from your attribution data entirely. When a prospect visits your site from a work laptop, reads a comparison article on their personal phone, and then converts on a tablet at home, connecting those three sessions to the same person is becoming technically difficult for all but the largest platforms with logged-in user bases.

For small businesses, this means that the data feeding your attribution models is increasingly incomplete. The touchpoints you can measure are not necessarily the touchpoints that matter most. And the attribution reports your ad platforms generate are optimistically biased, because each platform can only see its own contribution and has every incentive to claim credit.

This does not mean attribution is useless. It means that treating any single attribution report as ground truth is a mistake, and that small teams need a more practical approach.

The three measurement approaches that actually exist

The marketing measurement industry has consolidated around three complementary methodologies. Understanding what each one does (and does not do) helps you choose the right approach for your resources.

Multi-touch attribution (MTA) tracks individual user journeys to assign credit across touchpoints. It answers “which channels contributed to this conversion?” and is useful for tactical optimization within and across channels. Its weakness is that it cannot prove causation, only correlation, and it requires person-level tracking data that privacy changes are eroding. According to an EMARKETER and TransUnion survey, only 19.4 percent of US marketers rated MTA as the most reliable methodology in 2026.

Marketing mix modeling (MMM) uses aggregate, historical data to model how marketing inputs (spend by channel, promotions, pricing) drive business outcomes over time. It does not require user-level tracking, which makes it privacy-resilient. Modern MMM platforms operate on one-to-three-month cycles rather than the annual cadence that was standard a decade ago. MMM was rated the most reliable methodology by 27.6 percent of US marketers in the same survey. Its weakness is that it requires significant historical data (often two or more years) and statistical expertise to build and validate models, making it impractical for most small teams to implement from scratch.

Incrementality testing uses controlled experiments to prove whether a specific campaign actually caused conversions that would not have happened otherwise. A common approach is a geo-holdout test: pause ads in one region, keep them running in a similar region, and measure the difference in outcomes. Incrementality provides the strongest evidence of causation, but it tests individual campaigns rather than your full marketing mix, and it requires enough scale to detect statistically significant differences. A Skai and Path to Purchase Institute report found that 44 percent of marketers question the reliability of incrementality results, partly because smaller businesses lack the volume needed for robust tests.
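The arithmetic behind a geo-holdout test can be sketched in a few lines. The example below is a simplified difference-in-differences estimate under stated assumptions: the function name and all conversion counts are hypothetical, the two regions are assumed to behave similarly apart from the ad pause, and no significance test is included.

```python
def geo_holdout_lift(test_before, test_during, control_before, control_during):
    """Estimate conversions lost in the region where ads were paused.

    The control region (ads kept running) shows how conversions would have
    trended anyway; the test region's shortfall against that trend is the
    estimated incremental effect of the ads.
    """
    # What the test region would likely have done had ads kept running.
    expected_test = test_before * control_during / control_before
    incremental = expected_test - test_during
    return incremental, incremental / expected_test

# Hypothetical conversion counts for two comparable regions.
incremental, share = geo_holdout_lift(
    test_before=400, test_during=330,      # ads paused during the test
    control_before=500, control_during=525,  # ads kept running
)
print(f"Estimated incremental conversions: {incremental:.0f} ({share:.0%} of expected)")
```

With these made-up numbers, the control region grew 5 percent, so the test region would have been expected to reach 420 conversions. It reached 330, which suggests the paused ads were driving roughly 90 conversions, or about 21 percent of the expected total.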

The industry consensus, articulated clearly in a 2026 Deducive guide to measurement frameworks, is that no single approach gives the full picture. Enterprise teams use all three in combination. Small teams need to pick the elements that are feasible at their scale.

A practical attribution framework for teams without a data engineer

If your team has fewer than 50 people and no dedicated data scientist, the enterprise measurement stack is out of reach. But that does not mean you are stuck with last-click attribution and gut instinct. The following framework uses a combination of simple techniques that, together, provide a substantially more accurate picture than any single default report.

Step 1: Centralize your data before you try to analyze it

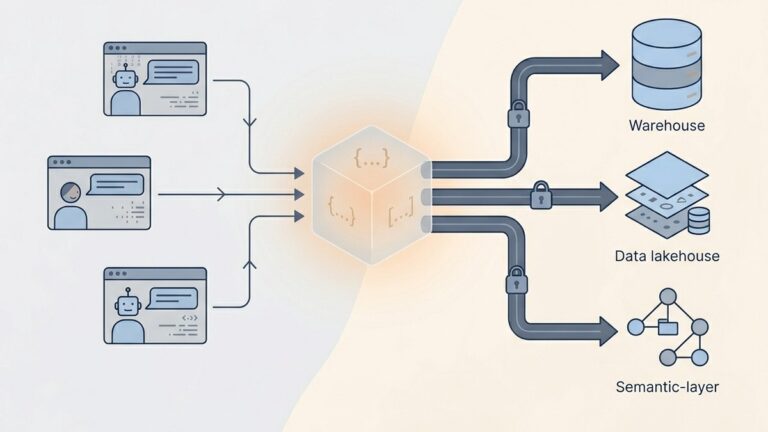

The first and most important step has nothing to do with attribution models. It is getting your marketing data, your sales data, and your customer data into the same place so you can compare them.

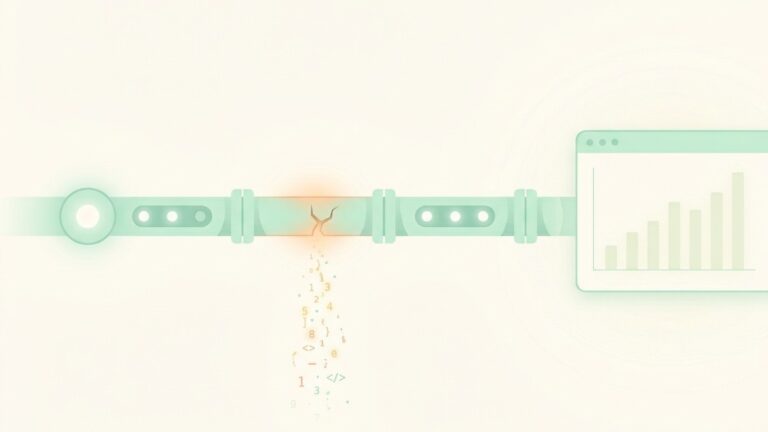

This is the problem that Lurika’s earlier guide on the data integration challenge explored in detail. Your Google Ads account reports conversions using its own tracking pixel. Your Meta Ads account does the same with its pixel. Your email platform counts conversions from email clicks. Your Shopify or WooCommerce store records actual orders. If you look at each system in isolation, the numbers will not reconcile.

The fix is to establish a single source of truth for conversions: your actual transaction data. Every other system’s conversion count is a claim; your order database is the fact. Platforms like QuantumLayers are designed to connect directly to multiple data sources (SQL databases, REST APIs, Google Sheets, CSV exports) and merge them automatically, handling the schema alignment and key matching that makes cross-source analysis possible without manual spreadsheet work. If you prefer a manual approach, a well-maintained spreadsheet that reconciles platform-reported conversions against actual orders on a weekly basis accomplishes the same thing, just with more effort.
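A weekly reconciliation can be as simple as the following sketch, which totals what each platform claims and compares it with the orders you actually recorded. The platform names and every number here are hypothetical placeholders.

```python
def reconcile(platform_claims, actual_orders):
    """Compare platform-claimed conversions against real orders."""
    if actual_orders <= 0:
        raise ValueError("actual_orders must be positive")
    claimed_total = sum(platform_claims.values())
    return {
        "claimed_total": claimed_total,
        "actual_orders": actual_orders,
        "overclaim": claimed_total - actual_orders,
        # A ratio above 1.0 means the platforms collectively claim
        # more conversions than you actually had orders.
        "overclaim_ratio": claimed_total / actual_orders,
    }

# Hypothetical weekly figures: each platform's self-reported conversions.
claims = {"google_ads": 120, "meta_ads": 95, "email": 60}
orders_in_database = 200  # the fact, taken from your store's order records

report = reconcile(claims, orders_in_database)
print(report)
```

In this made-up week the platforms claim 275 conversions against 200 real orders, an overclaim ratio of 1.375. Tracking that ratio over time shows how far platform reporting drifts from reality.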

Step 2: Use UTM parameters consistently and religiously

UTM parameters are tags you append to URLs to identify where traffic comes from. They are the single most important attribution tool for small teams, and they cost nothing to implement.

Every link you share (in an email, a social post, an ad, a guest article, or a partner page) should include UTM tags that identify the source (google, facebook, newsletter), the medium (cpc, email, organic, social), and the campaign (spring-sale, product-launch, brand-awareness). Google’s Campaign URL Builder generates tagged URLs in seconds.

The key is consistency. If one team member tags Meta ads as source=facebook and another uses source=meta, your data fragments. If some emails are tagged and others are not, you get incomplete attribution. Create a shared UTM naming convention document, enforce it, and audit your tags monthly. This simple discipline matters more than tooling, because no attribution software can fully compensate for inconsistent tagging.
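One way to enforce a naming convention is to generate every tagged link through a small helper instead of typing tags by hand. The sketch below uses only Python's standard `urllib.parse` module. The alias table, the allowed-medium list, and the choice of `facebook` as the canonical name are assumptions for illustration, so substitute whatever your own convention document specifies.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical convention: map common variants to one canonical source name.
SOURCE_ALIASES = {"meta": "facebook", "fb": "facebook", "ig": "instagram"}
ALLOWED_MEDIUMS = {"cpc", "email", "organic", "social", "referral"}

def tag_url(url, source, medium, campaign):
    """Return `url` with normalized utm_source, utm_medium, utm_campaign."""
    source = source.strip().lower()
    source = SOURCE_ALIASES.get(source, source)

    medium = medium.strip().lower()
    if medium not in ALLOWED_MEDIUMS:
        raise ValueError(f"medium {medium!r} is not in the naming convention")

    campaign = campaign.strip().lower().replace(" ", "-")

    parts = urlsplit(url)
    # Keep existing query parameters, but drop any stale UTM tags.
    query = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if not key.startswith("utm_")
    ]
    query += [
        ("utm_source", source),
        ("utm_medium", medium),
        ("utm_campaign", campaign),
    ]
    return urlunsplit(parts._replace(query=urlencode(query)))

print(tag_url("https://example.com/sale?ref=1", "Meta", "CPC", "Spring Sale"))
```

Because the helper rejects unknown mediums and rewrites aliases, two team members tagging the same Meta campaign end up with identical parameters.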

Step 3: Run before-and-after channel tests

You do not need a formal incrementality testing platform to test whether a channel is working. You need the willingness to turn something off and see what happens.

Pick a channel you spend money on but are unsure about. Pause it for two to four weeks. Track your overall conversion volume and revenue during that period against a comparable baseline period. If nothing changes, the channel was probably not driving incremental results, regardless of what it reported. If conversions drop, you have evidence of genuine impact, and you can estimate the channel’s contribution by measuring the gap.

This is a simplified version of the geo-holdout methodology that enterprise teams use, and it has real limitations. Seasonality, competitive activity, and other variables can confound the results. The test only works if the channel you pause represents a meaningful share of your spend. And you need to be comfortable with the possibility of losing some revenue during the test period.
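Measuring the gap from a pause test needs nothing more than averages. The following sketch compares weekly conversions before and during a pause, and reports the baseline's normal week-to-week variation so you can judge whether the gap is larger than ordinary noise. The weekly figures are hypothetical, and comparing the gap with the standard deviation is a rough sanity check, not a formal significance test.

```python
from statistics import mean, stdev

def pause_test(baseline_weekly, paused_weekly):
    """Compare average weekly conversions before and during a channel pause.

    Needs at least two baseline weeks so a standard deviation can be computed.
    """
    baseline_avg = mean(baseline_weekly)
    paused_avg = mean(paused_weekly)
    gap = baseline_avg - paused_avg
    return {
        "baseline_avg": baseline_avg,
        "paused_avg": paused_avg,
        "weekly_gap": gap,
        "gap_share": gap / baseline_avg,
        # Typical week-to-week wobble in the baseline; a gap smaller than
        # this is hard to distinguish from noise.
        "baseline_stdev": stdev(baseline_weekly),
    }

# Hypothetical weekly conversion totals.
baseline = [210, 198, 205, 203]  # four weeks with the channel running
paused = [181, 176, 185]         # three weeks with the channel paused

result = pause_test(baseline, paused)
for key, value in result.items():
    print(f"{key}: {value:.2f}")
```

If the weekly gap is several times larger than the baseline's standard deviation, the drop is unlikely to be ordinary fluctuation, though seasonality and the other confounders mentioned above still apply.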

But even imperfect tests produce better information than no tests. A 2026 EMARKETER analysis documented a case where a brand paused Pinterest ads in select regions for 30 days and discovered the platform’s effectiveness was significantly higher than traditional attribution models had suggested. The test changed their budget allocation in a way that the attribution data alone never would have.

Step 4: Track cohort-level CLV by acquisition source

Attribution is not just about which channel gets credit for the initial conversion. It is about which channel produces customers who are actually valuable over time. A channel with a high cost per acquisition but a high customer lifetime value (CLV) may be far more profitable than a cheap channel that produces one-time buyers.

This is where attribution intersects with the cohort analysis techniques covered in Lurika’s guide on tracking customer lifetime value. Tag your customers by their acquisition source (using the UTM data from step two), then track how much each source’s cohort spends over 30, 60, and 90 days. The patterns will almost certainly surprise you.
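The cohort calculation described above can be sketched with the standard library alone. In this example each order row carries the customer's acquisition source and first-order date. The row layout, the sample orders, and the function name are all hypothetical, and the result is average cumulative spend per customer within each window.

```python
from collections import defaultdict
from datetime import date

def cohort_clv(orders, windows=(30, 60, 90)):
    """Average cumulative spend per customer, by acquisition source and window.

    Each order is (customer_id, source, first_order_date, order_date, amount).
    """
    spend = defaultdict(lambda: {w: 0.0 for w in windows})
    customers = defaultdict(set)
    for customer_id, source, first_order, order_date, amount in orders:
        customers[source].add(customer_id)
        age_days = (order_date - first_order).days
        for window in windows:
            # An order counts toward every window it falls inside.
            if 0 <= age_days <= window:
                spend[source][window] += amount
    return {
        source: {w: spend[source][w] / len(ids) for w in windows}
        for source, ids in customers.items()
    }

# Hypothetical order history.
orders = [
    ("c1", "newsletter", date(2026, 1, 5), date(2026, 1, 5), 40.0),
    ("c1", "newsletter", date(2026, 1, 5), date(2026, 2, 20), 60.0),
    ("c2", "google", date(2026, 1, 10), date(2026, 1, 10), 80.0),
    ("c3", "google", date(2026, 1, 12), date(2026, 1, 12), 50.0),
    ("c3", "google", date(2026, 1, 12), date(2026, 4, 1), 30.0),
]

for source, clv in cohort_clv(orders).items():
    print(source, clv)
```

In this made-up data the Google cohort looks stronger at 30 days, but the newsletter cohort overtakes it by day 60. A first-purchase view would miss that reversal entirely.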

Industry data from McKinsey consistently finds that omnichannel customers, those who interact with a brand through multiple channels before purchasing, spend 30 to 40 percent more over their lifetime than single-channel customers. If your attribution model only measures the converting click and ignores the preceding touchpoints, you will undervalue the channels that create these higher-value, multi-touch customers.

Step 5: Triangulate instead of optimizing for a single number

The most important principle in small-team attribution is to stop looking for one correct number and start triangulating from multiple imperfect signals.

Your Google Ads conversion count is one signal. Your GA4 attribution report is another. Your platform-reported email conversions are a third. Your actual order data reconciled against UTM tags is a fourth. Your channel pause test is a fifth. None of these is perfectly accurate. Together, they outline a picture that is close enough to make good decisions.

In practice, this means reviewing your marketing performance from at least two different angles each month. If Google Ads claims 200 conversions but your UTM-tagged GA4 data only attributes 140 to paid search, the truth is probably somewhere in between, and you should budget accordingly. If your email platform claims a 15 percent conversion rate on a campaign but your order data shows only a 9 percent lift in orders during the campaign window, treat the platform’s figure as an upper bound and adjust your expectations.

The statistical preprocessing capabilities built into platforms like QuantumLayers can help automate parts of this triangulation by running correlation analysis and significance testing across your merged datasets, identifying which marketing variables are genuinely associated with revenue changes and which apparent patterns are just noise. But the core principle works even without specialized tools: compare multiple data sources, look for convergence, and be skeptical of any single platform’s self-reported numbers.

The metrics that matter more than attribution

While you are building a practical attribution framework, there are several metrics that provide a more reliable foundation for marketing decisions than any attribution model, precisely because they do not depend on tracking individual user journeys.

Blended customer acquisition cost (CAC). Total your marketing spend across all channels for a given period. Divide by the number of new customers acquired in that period. This number does not tell you which channel deserves credit, but it tells you whether your overall marketing is efficient. If blended CAC is rising quarter over quarter while your product and pricing have not changed, something in your channel mix is getting less efficient.

Marketing efficiency ratio (MER). Divide total revenue by total marketing spend. An MER of 5:1 means you generate $5 of revenue for every $1 of marketing spend. This is a blunt instrument, but it is hard to game. If you shift budget from one channel to another and MER improves, the reallocation was probably a good idea. If MER declines, it was probably not.

Revenue per visitor by source. For each traffic source (organic, paid search, social, email, direct, referral), divide the revenue attributed to that source by the number of visitors from that source. This gives you a rough proxy for traffic quality by channel. A source that sends little traffic but earns high revenue per visitor may deserve more investment, even if its volume metrics look unimpressive.

New-to-returning customer ratio by channel. Some channels are better at acquiring new customers; others are better at reactivating existing ones. Knowing this distinction prevents you from misjudging a channel’s value. Your email list is almost certainly reactivating existing customers, not acquiring new ones. If you attribute email revenue as “new customer acquisition,” you will overcount new customers and underfund actual acquisition channels.
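All four metrics above are simple ratios, as the following sketch shows. The function names and every figure are hypothetical, and the new-customer calculation is expressed as a share of total customers per channel, which is one reasonable way to read the new-to-returning ratio.

```python
def blended_cac(total_spend, new_customers):
    """Total marketing spend divided by new customers acquired."""
    return total_spend / new_customers

def marketing_efficiency_ratio(total_revenue, total_spend):
    """Total revenue divided by total marketing spend."""
    return total_revenue / total_spend

def revenue_per_visitor(revenue_by_source, visitors_by_source):
    """Revenue attributed to each source divided by its visitor count."""
    return {
        source: revenue_by_source[source] / visitors_by_source[source]
        for source in revenue_by_source
    }

def new_customer_share(new_by_channel, returning_by_channel):
    """Fraction of each channel's customers who are new rather than returning."""
    return {
        channel: new_by_channel[channel]
        / (new_by_channel[channel] + returning_by_channel[channel])
        for channel in new_by_channel
    }

# Hypothetical monthly figures.
spend, revenue, new_customers = 10_000, 50_000, 125

print("Blended CAC:", blended_cac(spend, new_customers))         # 80.0
print("MER:", marketing_efficiency_ratio(revenue, spend))        # 5.0
print(revenue_per_visitor(
    {"email": 12_000, "paid_search": 20_000},
    {"email": 3_000, "paid_search": 16_000},
))
print(new_customer_share(
    {"email": 10, "paid_search": 90},
    {"email": 190, "paid_search": 30},
))
```

In these made-up numbers email earns far more per visitor than paid search, yet only 5 percent of its customers are new. That is the pattern the last metric is meant to expose.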

Common attribution mistakes small teams make

Several patterns consistently lead small teams to misallocate their marketing budgets based on flawed attribution.

Trusting platform-reported conversions without reconciliation. Every ad platform over-reports conversions to some degree. Meta counts view-through conversions (people who saw an ad but did not click, then converted later) in its default reporting. Google Ads counts conversions within its attribution window regardless of whether other channels were involved. If you add up every platform’s claimed conversions, the total will exceed your actual sales, sometimes by a wide margin. Always reconcile against your order data.

Defunding top-of-funnel channels based on last-click data. Brand awareness channels (content marketing, SEO, social media, podcast sponsorships) almost never get last-click credit because they operate early in the customer journey. If you cut them based on last-click attribution, you will see a delayed but real decline in the volume of customers entering your funnel. The decline shows up weeks or months later, making it hard to connect to the budget cut that caused it.

Confusing correlation with causation in channel performance. If your email campaigns coincide with seasonal demand spikes, the email will appear to “drive” revenue that would have occurred anyway. This is the same correlation-versus-causation trap described in Lurika’s guide on why most small businesses fail at analytics. The before-and-after channel test described above is the simplest way to test for genuine causation.

Ignoring offline and dark social touchpoints. A significant portion of customer influence happens in places you cannot track: word of mouth, private messages, group chats, podcast mentions, in-person conversations. A Braze analysis describes the modern customer journey as resembling a pinball machine rather than a funnel. If your attribution framework only accounts for trackable digital touchpoints, it systematically undervalues the untrackable channels that may be driving your most loyal customers.

Over-investing in attribution software before fixing data fundamentals. The global multi-touch attribution software market is projected to reach $2.3 billion in 2026, according to Persistence Market Research, and the same report notes that deployment costs and technical prerequisites pose significant adoption barriers for small and medium-sized businesses. Before spending on attribution tooling, make sure your UTM tagging is consistent, your conversion data is clean, and you have at least 12 months of historical data to analyze. Attribution software amplifies the quality of your underlying data; it does not compensate for bad data.

Where to start this week

If you are currently making budget decisions based on whatever your ad platforms tell you, here is a practical sequence for building a better attribution practice.

This week: Audit your UTM tagging across every active marketing channel. Create a shared naming convention document and fix any inconsistencies. This is free and takes a few hours.

Next week: Build a simple reconciliation spreadsheet (or connect your data sources to an analytics platform) that compares platform-reported conversions against actual orders for the last 90 days. Note the discrepancies. This is your baseline reality check.

This month: Pick one channel you are uncertain about and run a two-to-four-week pause test. Document the setup, the baseline period, and the results. Even if the results are ambiguous, you will learn something about your measurement gaps.

This quarter: Start tracking 60-day CLV by acquisition source. This shifts the attribution question from “which channel gets credit for the click?” to “which channel produces customers who are actually valuable?” The second question is harder to answer but far more useful for budget allocation.

Attribution will never be perfectly accurate, for any business at any scale. The goal is not precision. The goal is to be less wrong than you were yesterday, and to make budget decisions based on triangulated evidence rather than a single platform’s self-interested reporting.

We are not owned by any analytics vendor. Our reviews are based on hands-on testing and honest evaluation. Some articles contain affiliate links. These help fund our work at no cost to you and never influence our recommendations.