Data Observability in 2026: What Data Teams Are Actually Monitoring (and Why Dashboards Keep Lying)

Last updated: April 2026

A finance analyst pulls a quarterly revenue report on Monday morning. The number looks fine. Two days later, the head of FP&A asks why the report doesn’t match the close-of-quarter figure pulled by accounting. After three hours of digging, the data team finds it: a silent schema change in an upstream Salesforce object renamed closed_won_amount to amount_closed_won two weeks ago. The transformation job kept running. It just stopped seeing the column. Half of the deals quietly dropped out of the pipeline.

Nobody was alerted. Nothing was technically broken. Every job ran green. The dashboard rendered. The number was wrong.

This is the precise failure mode that data observability is designed to catch, and it’s why the category has become one of the most-searched topics for data teams in 2026. According to the 2026 State of Analytics Engineering Report from dbt Labs, poor data quality remains the most frequently reported obstacle across data organizations, and industry forecasts now project that roughly half of organizations with distributed data architectures will adopt advanced observability platforms by the end of 2026, up from less than 20 percent in 2024.

But “data observability” is also one of the most marketed, least understood terms in the modern stack. This guide breaks down what it actually means, what data teams are monitoring in practice, where the category is heading, and what to look for when you evaluate the tools.

What data observability actually is

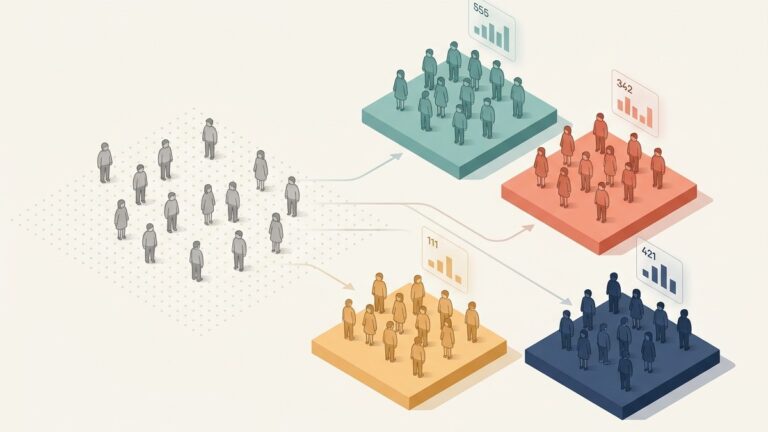

Data observability is the discipline of monitoring the health of data assets the same way DevOps teams monitor the health of services. The framework most practitioners reference is the five pillars model originally articulated by Barr Moses at Monte Carlo in her 2020 article introducing the category: freshness, volume, schema, distribution, and lineage.

- Freshness asks whether data is arriving on time. Is yesterday’s transaction data actually loaded? Is the dimension table that updates every six hours still updating?

- Volume asks whether the right amount of data is arriving. Did 4 million rows land last night, the way they do every other Tuesday, or did only 800,000 land?

- Schema asks whether the structure of the data has changed. Did a column get renamed, dropped, or change type without warning?

- Distribution asks whether values look normal. Did the country_code field suddenly start producing 12 percent NULLs when it has historically been 0.4 percent? Did the revenue field gain a row with twelve zeros?

- Lineage asks where data came from and where it went. If a metric is wrong, which upstream tables and transformations contributed to it, and which downstream dashboards are now also wrong?
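To make the schema pillar concrete, here is a minimal sketch of a schema drift check. It assumes you can snapshot a table's columns and types as a plain dictionary; the function name diff_schema and the sample snapshots are hypothetical, modeled on the renamed Salesforce column from the opening example rather than on any particular platform's API.

```python
def diff_schema(previous: dict[str, str], current: dict[str, str]) -> dict[str, list]:
    """Compare two {column_name: type} snapshots of the same table."""
    shared = set(previous) & set(current)
    return {
        # Columns that existed before and are gone now.
        "removed": sorted(set(previous) - set(current)),
        # Columns that are new in the current snapshot.
        "added": sorted(set(current) - set(previous)),
        # Columns present in both snapshots whose type changed.
        "retyped": sorted(
            (col, previous[col], current[col])
            for col in shared
            if previous[col] != current[col]
        ),
    }


# Hypothetical snapshots taken two weeks apart.
before = {"id": "string", "closed_won_amount": "double", "stage": "string"}
after = {"id": "string", "amount_closed_won": "double", "stage": "string"}

drift = diff_schema(before, after)
print(drift)
```

Note that a rename shows up as one removed column plus one added column. A snapshot diff cannot tell a rename from an unrelated drop and add, so a human (or lineage metadata) still has to interpret the result.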

The framing matters because it shifts the question data teams ask themselves. The traditional question is “did the pipeline run?” The observability question is “is the data trustworthy?” Those are not the same question, and the gap between them is where most production incidents live.

Why traditional monitoring isn’t enough

Most data teams already have monitoring of some kind. Airflow alerts when a DAG fails. dbt sends notifications when a test fails. Snowflake or BigQuery surfaces query failures. Slack channels fill up with green checks and occasional red Xs.

The problem is that almost all of this monitoring is binary and operational. A job either ran or it didn’t. A test either passed or it failed. None of it answers the harder questions: did the data that successfully landed actually look right? Did the metric that the dashboard is now displaying drift from its historical pattern in a way that should make a human pause?

The Salesforce schema change in the opening example illustrates this perfectly. The transformation didn’t fail. It silently produced a smaller dataset because the column it expected was no longer there. Every operational signal was green. The signals that would have caught the issue were a schema change on the source object and a volume drop on the resulting table, compared against its historical baseline, and those are exactly the kinds of signals that observability platforms are designed to surface.
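A volume check against a historical baseline can be sketched in a few lines. This is an illustration under simple assumptions, not how any specific platform works: it uses a plain z-score over recent row counts, the threshold of 3.0 is an arbitrary default, and the row counts are made up.

```python
from statistics import mean, stdev


def volume_anomaly(history: list[int], latest: int, threshold: float = 3.0) -> tuple[bool, float]:
    """Return (is_anomalous, z_score) for the latest row count.

    history needs at least two past row counts for the same table.
    """
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # A perfectly flat history: any change at all is a deviation.
        differs = latest != mu
        return differs, float("inf") if differs else 0.0
    z = (latest - mu) / sigma
    return abs(z) > threshold, z


# Hypothetical nightly row counts, then a night where half the rows vanish.
history = [4_010_000, 3_980_000, 4_050_000, 3_990_000, 4_020_000, 4_000_000]
flagged, z = volume_anomaly(history, 2_000_000)
print(flagged, round(z, 1))
```

The job that produced the 2,000,000 rows would still report success. Only the comparison against the table's own history makes the drop visible. Real baselines usually also need to account for weekday and seasonal patterns, which this sketch ignores.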

Monte Carlo’s data downtime research has been widely cited for two findings: that data engineers spend roughly 30 to 40 percent of their time dealing with data quality issues, and that data downtime (the time during which data is partially or fully wrong) can run into tens or hundreds of hours per quarter at organizations without observability tooling. Whatever the exact number is at your company, the structural point holds: most teams underinvest in monitoring data and overinvest in monitoring infrastructure.

What data teams are actually monitoring in 2026

The category has matured significantly since 2022. Three things have changed.

First, the scope has expanded beyond freshness alerts. Early observability tools were essentially “did this table update on time?” alerting. The current generation of platforms covers schema drift detection, distribution anomalies, row-count anomalies, null-rate changes, and category cardinality changes. Most also integrate with dbt to test on a per-model basis rather than per-table.

Second, lineage has moved from documentation to operational tooling. Five years ago, lineage was something you generated occasionally for a compliance review. Today, end-to-end column-level lineage is what teams use during incidents to determine the blast radius of a problem. When a source column changes, the relevant question is not “what dashboards exist somewhere,” it is “which dashboards consume this column, and which executives are about to refresh them.”
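The blast radius question is, at bottom, a graph traversal. The sketch below assumes column-level lineage is available as a mapping from each column to the assets that read it directly; the asset names and the dash. prefix for dashboards are hypothetical.

```python
from collections import deque


def downstream_of(edges: dict[str, list[str]], start: str) -> list[str]:
    """Breadth-first walk returning every asset downstream of start."""
    seen = {start}
    queue = deque([start])
    impacted = []
    while queue:
        node = queue.popleft()
        for child in edges.get(node, []):
            if child not in seen:
                seen.add(child)
                impacted.append(child)
                queue.append(child)
    return impacted


# Hypothetical column-level lineage: source column -> direct consumers.
lineage = {
    "salesforce.opportunity.closed_won_amount": ["stg_opportunities.closed_won_amount"],
    "stg_opportunities.closed_won_amount": ["fct_revenue.amount"],
    "fct_revenue.amount": ["dash.quarterly_revenue", "dash.exec_summary"],
}

impacted = downstream_of(lineage, "salesforce.opportunity.closed_won_amount")
dashboards = [name for name in impacted if name.startswith("dash.")]
print(dashboards)
```

With table-level lineage the same walk would return every dashboard that touches fct_revenue at all, which is why column-level edges narrow the incident so much.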

Third, observability has been pulled into the AI conversation. As more teams build retrieval-augmented generation systems, dashboards backed by LLMs, and agentic workflows that consume data programmatically, the cost of bad data has gone up. A wrong number on a slide is embarrassing. A wrong number that an AI agent uses to take an action is a liability. The dbt 2026 report flagged this directly: 71 percent of practitioners are concerned about hallucinated or incorrect data reaching stakeholders, and a majority view validation and oversight as a top governance priority. Observability is one of the load-bearing answers to that concern.

The data contracts question

If you read about observability for very long, you’ll run into the term “data contracts.” The two are closely related but solve different problems.

A data contract is a producer-side agreement: the team that owns the source data commits to a schema, a freshness SLA, and a set of semantic guarantees. If the producer changes the contract, downstream consumers get advance notice and migration support.

Data observability is a consumer-side safety net: even if no contract exists, or even when a contract is broken, monitoring detects the resulting damage in the warehouse before it propagates to dashboards and downstream consumers.
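One way to picture how the two meet: a contract can be written down as a small, checkable object, and the consumer side can compare what actually landed against it. The sketch below is an assumption-heavy illustration. The Contract class, its fields, and the sample values are hypothetical and do not follow any particular data contract specification.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class Contract:
    columns: dict[str, str]    # column name -> promised type
    max_staleness: timedelta   # freshness SLA


def contract_violations(
    contract: Contract,
    observed_columns: dict[str, str],
    last_loaded_at: datetime,
    now: datetime,
) -> list[str]:
    """List every way the observed table departs from the contract."""
    problems = []
    for name, expected in contract.columns.items():
        actual = observed_columns.get(name)
        if actual is None:
            problems.append(f"missing column: {name}")
        elif actual != expected:
            problems.append(f"type changed: {name} {expected} -> {actual}")
    if now - last_loaded_at > contract.max_staleness:
        problems.append("freshness SLA missed")
    return problems


# Hypothetical contract and observation.
contract = Contract(
    columns={"id": "string", "closed_won_amount": "double"},
    max_staleness=timedelta(hours=24),
)
problems = contract_violations(
    contract,
    observed_columns={"id": "string", "amount_closed_won": "double"},
    last_loaded_at=datetime(2026, 4, 5, 1, 0, tzinfo=timezone.utc),
    now=datetime(2026, 4, 6, 9, 0, tzinfo=timezone.utc),
)
print(problems)
```

The semantic guarantees a contract carries (what a column means, not just its type) are not captured here; those typically need tests written by people who know the domain.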

In practice, mature data teams in 2026 are doing both. Contracts prevent a category of incidents at the source. Observability catches the rest. Andrew Jones’s data contracts work and accompanying book are among the most-referenced resources on the producer side, and they pair naturally with observability tooling on the consumer side.

The two together are also what enables the “platform as a product” model that’s increasingly common at larger organizations in 2026: a central data platform team publishes contracts, monitors observability signals, and provides reliability targets that other teams can plan around.

Where statistical rigor fits in

Most observability platforms detect anomalies using straightforward statistical tests on metrics they extract from your tables. A row count is compared against its historical distribution. A null rate is compared against its baseline. A categorical distribution is compared using a chi-square test against a recent reference window.
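As an illustration of a null rate compared against its baseline, the sketch below uses a one-sample z-test for a proportion. That is a deliberately simple stand-in chosen for this example, not the chi-square test mentioned above and not a claim about what any vendor runs. The function name and the row counts are hypothetical; the 0.4 percent baseline echoes the country_code example earlier in the article.

```python
from math import sqrt
from statistics import NormalDist


def null_rate_p_value(null_count: int, row_count: int, baseline_rate: float) -> float:
    """Two-sided p-value for the observed null rate versus a baseline rate.

    Assumes row_count > 0 and 0 < baseline_rate < 1, and relies on the
    normal approximation, which needs a reasonably large row_count.
    """
    observed = null_count / row_count
    standard_error = sqrt(baseline_rate * (1 - baseline_rate) / row_count)
    z = (observed - baseline_rate) / standard_error
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Baseline of 0.4 percent NULLs; hypothetical batches of 10,000 rows.
spike = null_rate_p_value(1_200, 10_000, baseline_rate=0.004)   # 12 percent NULLs
normal = null_rate_p_value(42, 10_000, baseline_rate=0.004)     # 0.42 percent NULLs
print(spike, normal)
```

The jump to 12 percent yields a p-value that is effectively zero, while the ordinary batch is nowhere near significant. On very large tables even trivial shifts become statistically significant, which is part of why a p-value alone makes a poor alerting rule.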

This works well most of the time. It also produces false positives, which is the operational problem that derails most observability rollouts. If every analyst gets paged for every two-sigma deviation in every metric across every table, the alerts get muted within a week.

The platforms that handle this well do two things. They apply false discovery rate corrections so that running thousands of statistical tests in parallel doesn’t generate thousands of alerts at the conventional p < 0.05 threshold. And they distinguish between statistical significance and practical significance, the latter being whether the deviation is large enough in business terms to matter even if it’s statistically real.
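Both ideas can be sketched together. The code below implements the Benjamini-Hochberg procedure, one common false discovery rate correction, and then keeps only the findings whose effect is large enough to matter. Whether a given platform uses this exact procedure is not something the sources above confirm; the 10 percent minimum change, the function names, and the sample values are assumptions for illustration.

```python
def benjamini_hochberg(p_values: list[float], q: float = 0.05) -> list[bool]:
    """Return, per test, whether it is rejected at false discovery rate q."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    # Find the largest rank k whose p-value is at or below (k / m) * q.
    cutoff_rank = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank / m * q:
            cutoff_rank = rank
    # Every test ranked at or below the cutoff is rejected.
    rejected = [False] * m
    for rank, idx in enumerate(order, start=1):
        if rank <= cutoff_rank:
            rejected[idx] = True
    return rejected


def actionable(
    p_values: list[float],
    relative_changes: list[float],
    q: float = 0.05,
    min_change: float = 0.10,
) -> list[bool]:
    """Alert only when a deviation is both FDR-significant and large."""
    significant = benjamini_hochberg(p_values, q)
    return [
        sig and abs(change) >= min_change
        for sig, change in zip(significant, relative_changes)
    ]


# Five hypothetical monitors: p-value and relative change versus baseline.
p_values = [0.001, 0.008, 0.039, 0.041, 0.60]
relative_changes = [-0.50, 0.02, 0.30, 0.01, 0.00]

print(benjamini_hochberg(p_values))
print(actionable(p_values, relative_changes))
```

A naive p < 0.05 rule would fire on four of these five monitors. The correction keeps two, and the effect size filter keeps one: the 50 percent drop. The second monitor is statistically real but only a 2 percent change, which is the kind of alert that trains people to stop reading alerts.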

QuantumLayers has written about how it applies these advanced statistical safeguards when generating automated insights, including FDR correction and effect size reporting. The same principle applies to observability tooling: the value of an alert is not “this is statistically unusual,” it is “this is statistically unusual and large enough to act on.”

The tools that don’t do this end up training their users to ignore alerts, which is functionally the same as having no alerting at all.

What to look for when evaluating an observability platform

The category is crowded. Monte Carlo, Bigeye, Acceldata, Anomalo, Soda, Datafold, Sifflet, Metaplane, and a long tail of newer entrants all market themselves with overlapping language. The questions that separate them are about how the platform operates, not about what is on the feature list.

Connection model. Does the platform query your warehouse directly, or does it require an agent or copy of your data? In-warehouse monitoring is generally preferred for security and cost reasons. Tools that require copies of your data create their own data movement problem.

Coverage breadth. Does it monitor only warehouse tables, or does it cover sources, transformations, BI tools, and reverse-ETL targets too? End-to-end coverage is more expensive but catches issues that table-level monitoring misses.

Lineage depth. Column-level lineage is meaningfully more useful than table-level lineage during incidents. A platform that knows that dim_customer.region feeds the executive dashboard’s regional breakdown can tell you exactly what to roll back. Table-level lineage tells you something is broken somewhere.

Alert quality. Ask for a sample of the alerts the platform generated for an existing customer in the last 30 days and the resolution rate. A high alert volume with a low investigation rate is a sign that the noise problem isn’t solved.

Custom monitor extensibility. Out-of-the-box monitors cover the obvious cases. Every team has at least a handful of metrics that need custom logic, and the platforms that let you write SQL-based custom monitors with the same alerting and lineage integration are meaningfully more useful at scale.

Time to first useful alert. The honest version of this question is: from the day we sign the contract, how long before someone on our team gets an alert that prevents a real incident? If the answer is measured in months, the platform is probably configurable in theory and useless in practice.
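Returning to the custom monitor point above: a SQL-based monitor is usually just a query that returns one number plus a threshold. The sketch below shows that shape using an in-memory SQLite database as a stand-in for a warehouse. The run_monitor helper, the orders table, and the thresholds are all hypothetical, and real platforms add scheduling, alert routing, and lineage on top.

```python
import sqlite3


def run_monitor(conn: sqlite3.Connection, sql: str, max_allowed: float) -> dict:
    """Run a query that returns a single number; breach if it exceeds max_allowed."""
    (value,) = conn.execute(sql).fetchone()
    return {"value": value, "breached": value > max_allowed}


# In-memory stand-in for a warehouse table.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER, country_code TEXT, revenue REAL)")
conn.executemany(
    "INSERT INTO orders VALUES (?, ?, ?)",
    [(1, "US", 120.0), (2, None, 80.0), (3, "DE", -15.0), (4, "FR", 60.0)],
)

# Business rule: revenue should never be negative.
negative_revenue = run_monitor(
    conn, "SELECT COUNT(*) FROM orders WHERE revenue < 0", max_allowed=0
)
# Business rule: at most 5 percent of orders may lack a country code.
null_rate = run_monitor(
    conn, "SELECT AVG(country_code IS NULL) FROM orders", max_allowed=0.05
)
print(negative_revenue, null_rate)
```

The useful property is that the team's domain knowledge lives in the SQL, while everything else about the monitor stays uniform.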

The bottom line

Data observability is what happens when the discipline of “monitoring infrastructure” graduates into “monitoring data itself.” For data teams in 2026, the category is no longer a luxury or a nice-to-have; it has become a baseline expectation, particularly as more downstream consumers, including AI systems, take action on warehouse data without human review.

The five pillars (freshness, volume, schema, distribution, lineage) are a useful conceptual frame, but the operational reality is that the value of observability depends entirely on alert quality. Tools that flood teams with statistically valid but practically meaningless alerts get muted. Tools that combine FDR-corrected anomaly detection, business-context filtering, and column-level lineage are the ones that actually reduce data downtime.

If your team is starting from zero, start with freshness and volume monitoring on your most-consumed tables, add schema change detection across your sources, and build lineage coverage outward from the dashboards that executives actually look at. The full observability stack is not built in a quarter. But the version that catches the silent Salesforce-renamed-a-column incident from the opening of this article is buildable in weeks, and it is almost certainly worth more than whatever you are currently spending on dashboard tooling.

The dashboard answers the question you asked. Observability tells you whether the answer is true.

Lurika is an independent publication covering data analytics. We are not owned by any analytics vendor.